Introduction

You're at a conference and need to join an instant messaging session with a few of your colleagues over the network. You type in the URL, hit enter, and are promptly told you must wait because the website is hosted on a single overloaded server managed by one of your company's third-party suppliers.

As you look around, you find that everyone has this issue. This is the kind of inefficient traffic management that load balancing is designed to fix, by optimizing traffic distribution over multiple backend servers.

Load balancing, essential for software and web developers, is a crucial technique for optimizing the performance of applications and websites. By distributing traffic across multiple servers, load balancing helps prevent any single server from becoming overwhelmed and improves responsiveness.

This introduction will explore load balancing, how the main techniques work, its benefits, and the types of load balancers available.

Defining Load Balancing

What is Load Balancing?

Load balancing is the practice of spreading tasks or processes across several computers or parts of a system so that everything runs efficiently and no single part gets overloaded.

Load balancing helps us use resources wisely, speed up processes, and respond faster. This is typically achieved by distributing processing tasks across servers that serve similar functions. At the leadership level, these decisions often intersect with vendor strategy, especially when partnering with custom web application development companies that need to align performance goals, SLAs, and deployment cadence with your platform constraints. This added complexity makes computer networks and data centers harder to design.

Load balancing improves the efficiency of computing systems by distributing work among multiple computers to reduce bottlenecks. It can be implemented at many levels in a computer system, including within individual server modules, in software running on the operating system (software load balancing), or in dedicated devices on the network (hardware load balancing).

Load balancers are often deployed in large-scale production environments like e-commerce websites and cloud computing applications.

The purpose of load balancing is to ensure that each user's request receives equal treatment and service from the system, regardless of how many users access it at any given time. Even when many users attempt to access your website or service at once, the pool of servers can handle them without slowing down or shutting down altogether.

How does load balancing work?

The load balancer can be configured to distribute requests among multiple servers. It is also possible for a load balancer to redirect users from one server to another, depending on what kind of application they are accessing and how much traffic they generate.

- Distributing incoming network traffic: Load balancers act as a "traffic cop," distributing client requests across all servers capable of fulfilling those requests to maximize speed and capacity utilization.

- Health checks: Load balancers regularly monitor servers to ensure they are available and performing well. Failover: when a server becomes unresponsive, the load balancer stops sending it traffic and routes requests to the remaining healthy servers.

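- Session persistence: Ensuring a user's requests are sent to the same server they initially connected to. This matters in web applications that keep session state, such as a login or a shopping cart, on the server: without persistence, users can lose that state mid-session, which hurts both user experience and application functionality.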
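The three behaviors above can be sketched together in a few lines of Python. This is a minimal illustration, not how any particular product works: the `Backend` and `LoadBalancer` classes, the `probe` callable, and the server names are all hypothetical, and a real health check would typically be a periodic network request rather than a function call.

```python
from itertools import count


class Backend:
    """A hypothetical backend server tracked by the load balancer."""

    def __init__(self, name):
        self.name = name
        self.healthy = True


class LoadBalancer:
    def __init__(self, backends, probe):
        self.backends = list(backends)
        self.probe = probe      # assumed health probe: Backend -> bool
        self.sessions = {}      # session id -> Backend (sticky sessions)
        self._counter = count()

    def run_health_checks(self):
        """Mark each backend healthy or unhealthy based on the probe."""
        for backend in self.backends:
            backend.healthy = self.probe(backend)

    def route(self, session_id):
        """Return the backend for this session, failing over if needed."""
        pinned = self.sessions.get(session_id)
        if pinned is not None and pinned.healthy:
            return pinned  # session persistence: keep the same server
        healthy = [b for b in self.backends if b.healthy]
        if not healthy:
            raise RuntimeError("no healthy backends available")
        # Failover or first contact: rotate over the healthy servers only.
        chosen = healthy[next(self._counter) % len(healthy)]
        self.sessions[session_id] = chosen
        return chosen
```

In this sketch a session stays pinned to its server until a health check marks that server as down, at which point the next request is re-pinned to a healthy one. Any session state stored only on the failed server would still be lost, which is one reason applications often keep such state in a shared store.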

Types of Load Balancers

There are two main types of load balancers:

Hardware load balancers:

These are physical devices that sit between web servers and users. They can scale to handle large amounts of traffic and can be configured to ensure that all requests get sent to an available server, which is critical for ensuring reliability and uptime.

Hardware load balancers can also be configured with multiple virtual IP addresses (VIPs), allowing the device to appear as multiple servers in clients' eyes.

Well-known hardware load balancers include:

1. F5 BIG-IP
2. Citrix ADC (formerly NetScaler)
3. A10 Networks Thunder ADC

- Definition: Physical devices dedicated to managing and distributing network traffic.

- Advantages: High performance, reliability, and configurability.

- Limitations: Scalability issues, upfront costs, and potential for single points of failure.

Software load balancers:

These are applications that run on standard servers or virtual machines and use shared resources to route traffic. Software-based load balancing is generally less expensive than hardware-based solutions, but it may require additional configuration on the servers behind the load balancer.

- Definition: Virtual tools or applications managing application traffic distribution.

- Advantages: Flexibility, scalability, and adaptability to different environments.

- Limitations: Depending on the underlying infrastructure, software load balancers may require a more intricate setup and can run into performance issues.

Hardware load balancers vs. Software load balancers

1. Upfront costs vs. operational costs

The upfront costs of a hardware-based load balancer are generally higher than those of a software-based load balancer. However, once the infrastructure is set up and configured, it tends to require less maintenance and lower ongoing costs. The trade-off is that a hardware appliance is generally less flexible, because its capacity and features are tied to the device.

Software-based load balancers are cheaper to start with but can require more maintenance and ongoing costs. A single software instance may also have a lower performance ceiling than a dedicated hardware appliance.

2. Hardware limitations vs. software scalability

Hardware-based load balancers may have limitations that software-based load balancers don’t. For example, a given device may not support specific protocols or encryption levels. Also, the number of users and connections that a hardware-based load balancer can handle is limited by the capacity of the device itself, whereas a software load balancer can usually be scaled by adding instances or giving it more resources.

3. Installation of physical devices vs. deploying virtual solutions

Virtual load balancers are more convenient to install, requiring no physical hardware. This can be a significant advantage if you need to quickly scale up or down capacity and don’t have time to deploy new devices. However, it’s important to note that physical load balancers may offer better performance than virtual ones due to their higher throughput speeds.

4. Hardware specs vs. software adaptability

Hardware-based load balancers are built to fixed specifications. This can mean better performance if the device is explicitly designed for your application or workload requirements, but those specifications are hard to change later. Software-based load balancers are more adaptable: they can be reconfigured, updated, and moved between environments, although their performance depends on the servers they run on.

Load Balancing Algorithms

Load-balancing algorithms may be used to achieve several objectives. These include:

- Balancing the load across multiple servers to increase throughput and decrease response time.

- Reducing the response time for a single user from that user's perspective by spreading out the requests for each resource among multiple servers, thus reducing contention for resources on any given server.

- Optimizing resource utilization by distributing load between the servers to maximize throughput or minimize response time (for example, distributing CPU-intensive tasks across CPUs).

- Providing redundancy by distributing requests across multiple servers in case one or more fail.

- Allocating work with certain characteristics (such as CPU time) to specific servers. This can be done according to fixed criteria or on-demand based on perceived needs at runtime.

There are several different load-balancing algorithms, but the most common ones are:

- Round-robin: This method sends each client request to the next server in the list, cycling back to the first server after the last. The idea is to spread requests evenly, so that no single server receives a long run of consecutive requests.

- Least connections: This method keeps track of the number of connections each server has open and sends new requests to the server with the fewest open connections. Variants of this method also weigh other factors, such as server capacity or response time, when deciding which server should receive a new request.

- IP hash: This method computes a hash of the client's IP address and uses it to choose a server, so requests from the same client are consistently sent to the same server. It is useful when requests from a client need to keep reaching the same server while the overall load is still spread across multiple servers.

- Server load: This method measures server load to route requests. A client’s request is sent to the server with the least load on its CPU and memory. It can be problematic when clients are distributed across multiple locations, because one server may be more heavily loaded than another even though they handle requests from the same client IP address space.
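The first three selection methods are simple enough to sketch directly. The snippet below is an illustrative sketch under stated assumptions rather than a production implementation: the function names and server names are hypothetical, and the connection counts are assumed to be supplied by whatever tracks open connections.

```python
import hashlib
from itertools import cycle


def round_robin(servers):
    """Return a function that hands out servers in rotation."""
    rotation = cycle(servers)
    return lambda: next(rotation)


def least_connections(open_connections):
    """Pick the server with the fewest open connections.

    open_connections maps server name -> current connection count.
    """
    return min(open_connections, key=open_connections.get)


def ip_hash(client_ip, servers):
    """Map a client IP to a server using a stable hash."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]
```

The sketch uses `hashlib` rather than Python's built-in `hash()` because string hashes from `hash()` are randomized per process by default, which would break the "same client, same server" property across restarts. Note also that with this simple modulo scheme, changing the number of servers remaps most clients.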

Factors Influencing Algorithm Choice

The choice of an algorithm is influenced by several factors:

- The size of the problem: The algorithm must be able to solve a problem of a given size and complexity.

- The quality of the solution: The algorithm must produce a solution that is as good as possible, given the constraints of cost, time, and accuracy.

- The resources available: The algorithm must be feasible with respect to available memory, processing time, and other capabilities of the computer system on which it will be executed.

- The nature of the problem: Different kinds of problems require different kinds of algorithms; each kind has its own unique characteristics and properties that must be understood before any attempt can be made to solve it efficiently using an optimal method.

- Computational complexity: Computational complexity relates to the time an algorithm takes to complete its task. Some algorithms solve the same type of problem faster than others.

Benefits of Load Balancing

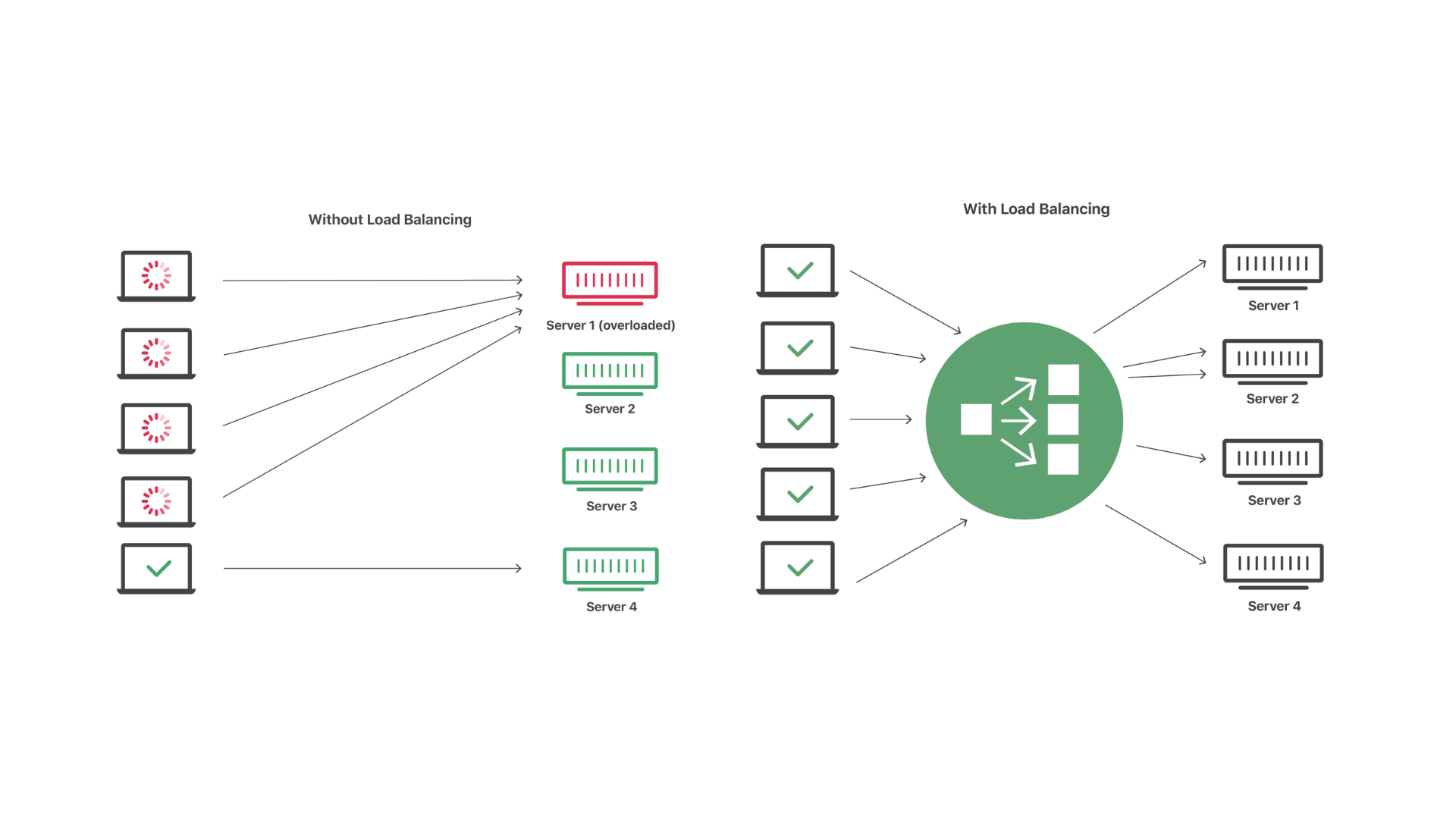

With and without load balancing.

The benefits of load balancing include:

Scalability

By using a load balancer, you can scale your website to handle a large number of visitors. The load balancer distributes the requests across your servers, so you can increase capacity by adding servers as needed. This is especially useful when your site receives a lot of client traffic and needs more resources to handle all requests.

You can add or remove servers from the system to meet your changing needs, and the load balancer will quickly adapt to the new configuration.
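As a rough illustration of how a balancer can adapt to servers being added or removed, here is a minimal sketch of a round-robin pool whose membership changes at runtime. The `ServerPool` class and the server names are hypothetical; real load balancers usually pick up such changes through configuration reloads or service discovery.

```python
class ServerPool:
    """Round-robin pool whose membership can change at runtime."""

    def __init__(self, servers=()):
        self._servers = list(servers)
        self._next = 0

    def add(self, server):
        """Start sending traffic to a new server."""
        if server not in self._servers:
            self._servers.append(server)

    def remove(self, server):
        """Stop sending traffic to a server."""
        if server in self._servers:
            self._servers.remove(server)

    def pick(self):
        """Return the next server in rotation."""
        if not self._servers:
            raise RuntimeError("pool is empty")
        server = self._servers[self._next % len(self._servers)]
        self._next += 1
        return server
```

Because `pick` takes the index modulo the current pool size, a newly added server starts receiving requests on the next rotation and a removed server receives none.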

Optimized Resource Utilization

The load balancer helps ensure that work is spread evenly so that no server sits idle while another is overloaded, which can help you save money on hardware costs. Additionally, if one of your servers becomes overloaded and begins to slow down or fail, the load balancer can detect this problem and move requests away so that as few visitors as possible are affected.

Ease of Use

Many web hosting providers offer various tools for managing your website, including some that allow you to configure load-balancing rules easily.

Enhanced Performance

Load balancers distribute the requests across multiple servers so that the system can handle more requests simultaneously than a single server could. This means that successive requests, even from the same user, might be handled by different backend servers, which gives you more throughput (the number of requests processed per second).

This can translate into better performance for users because their requests will be handled faster, especially when there are many concurrent requests by users accessing an application or website.

High Availability

Load balancers provide high availability by ensuring your application is always available to users. If one of your cluster's servers goes offline, another server will still handle requests. This means that downtime is reduced or eliminated, and you can avoid service outages due to system failures.

The benefit of high availability is that it allows you to avoid downtime, which can be costly. If your application goes offline and users cannot access it, they may become frustrated and leave. Sometimes, they might even decide not to return if they don’t feel their needs are being met.

Redundancy

Redundancy is another benefit of a clustered application. If one server fails or goes offline, the others take over and continue handling requests. This reduces the likelihood that you’ll need to spend time rebuilding your application or replacing hardware components in a hurry should something go wrong.

Advanced Load Balancing Considerations

The following are some important considerations for load balancing:

The first thing to consider when designing your load-balancing strategy is security. Make sure your web applications are protected from unauthorized access and attacks. There are many different ways to protect your applications, ranging from firewalls to SSL certificates, but one of the most effective methods is to use a reverse proxy.

A reverse proxy like HAProxy or Nginx can be placed in front of your web applications and configured to use SSL/TLS encryption and authentication headers so that only authorized users can access them. Because clients only ever talk to the proxy, attackers cannot reach your applications through a direct request, provided the application servers accept traffic only from the proxy.

Security measures also include enabling firewall rules at the load balancer level so that only authorized applications can access it. Because these rules block all traffic except that from authorized applications, they help protect against DoS attacks as well as other unauthorized access attempts, such as password cracking or MITM attacks on SSL/TLS connections.

In addition to security measures, it is essential to keep your application available by providing enough servers at each location in case one goes down. Global server load balancing (GSLB) is a common way to accomplish this. GSLB allows you to place multiple servers in multiple locations worldwide and route traffic to them based on their location and availability.

If one location fails, traffic will be routed to another location that is still up and running. This helps your application remain available to users, even when there are issues with one or more servers.
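The routing decision behind GSLB can be sketched as "pick the closest location that is still up". The example below is only an illustration under assumptions: the `pick_region` function, the region names, and the latency table are hypothetical, and real GSLB is commonly implemented at the DNS layer with its own health monitoring.

```python
def pick_region(client_region, regions, latency_ms):
    """Return the available region with the lowest latency for this client.

    regions maps region name -> True if the region is up.
    latency_ms maps (client_region, region) -> estimated latency in ms.
    """
    candidates = [name for name, up in regions.items() if up]
    if not candidates:
        raise RuntimeError("no region is available")
    return min(candidates, key=lambda name: latency_ms[(client_region, name)])
```

With two regions available, a client is sent to the lower-latency one; if that region is marked as down, the same call returns the remaining region instead.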

It’s also essential to measure performance across all layers in your application stack, including frontend HTTP requests, database queries, and backend serv

Conclusion

Hopefully, you found the information in this guide educational and practical. Load balancing is a powerful tool that has been around for over three decades and shows no sign of slowing down. It is well worth the price of admission.

The internet at its current scale would be hard to imagine without load balancing. Nowadays, almost everything we do involves the internet; therefore, load balancing is essential to keeping our interactions fast and seamless.

Load balancing has proven to be a crucial resource in optimizing traffic distribution and will be vital in developing new technologies that depend on it.

If you want to create a reliable, high-performance network that can handle even the highest demand for internet traffic, load balancing is one of the most vital tools in your arsenal.

Numerous use cases exist for load-balancing software and the excellent physical hardware developed over the years. If you want an easy way to optimize web traffic between multiple servers, then load balancing is the way to go!