Introduction - visual regressions as systemic risk

Visual regressions rarely break builds. They break trust.

A button shifts by two pixels. A font weight changes under a specific viewport. A gradient renders differently after a dependency update. The application functions. Tests pass. Users notice.

The cost accumulates silently across releases. Brand consistency erodes. Design confidence degrades. Support tickets arrive without clear correlation to recent changes. By the time a visual regression is acknowledged in production, the damage has already occurred.

At scale, this problem is structural. Multi-brand products, white-label platforms, and shared component libraries multiply the visual surface area. A single change in a design token or layout primitive can propagate across dozens of branded contexts.

Visual regression testing is not about pixel perfection. It is about maintaining predictable visual integrity under continuous change.

Multi-brand reality and intentional visual divergence

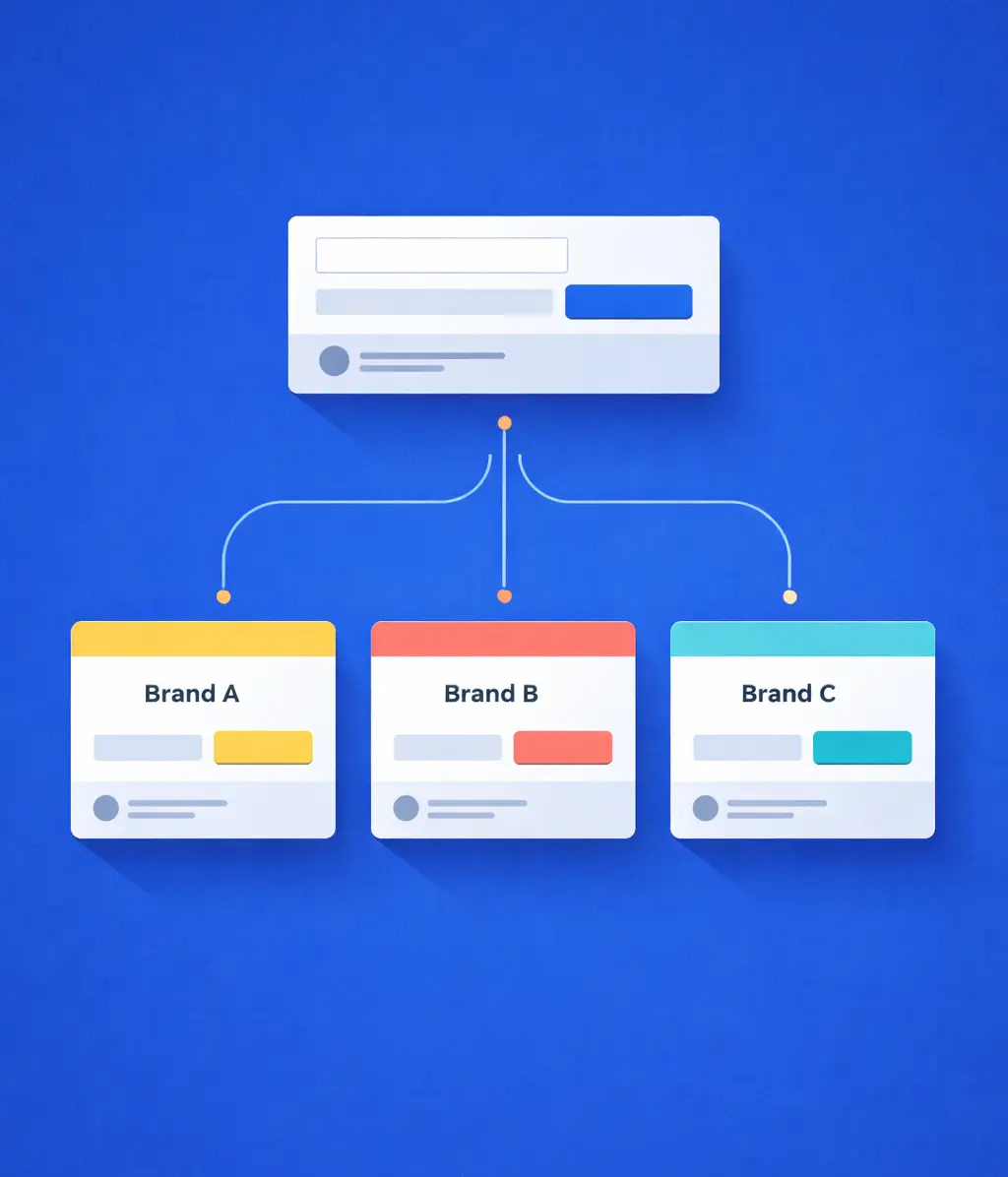

In multi-brand systems, visual divergence is intentional. Themes differ in color systems, typography, spacing rules, and component emphasis. The same component may be correct across all brands while appearing radically different at the pixel level.

This invalidates several common assumptions:

- There is no single “correct” baseline

- Global pixel thresholds collapse under brand variance

- Page-level snapshots amplify noise instead of signal

Any scalable system must treat brand context as a first-class dimension, not as a configuration detail.

CI and infrastructure constraints

CI pipelines are optimized for determinism and throughput, not visual nuance.

The constraints are unavoidable:

- Headless rendering is resource-intensive

- Fonts, subpixel rounding, and GPU variance introduce noise

- Snapshot volume grows multiplicatively with themes and breakpoints

Visual regression systems that ignore these constraints tend to fail socially before they fail technically. Engineers stop trusting them long before they stop running.
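The multiplication is easy to underestimate. Here is an illustration with hypothetical counts, not figures from any particular system. Let C be the number of snapshotted components, T the number of themes, B the number of breakpoints, and S the number of interaction states captured per component:

$$
N = C \times T \times B \times S
$$

With C = 50, T = 6, B = 3 and S = 2, that is 50 × 6 × 3 × 2 = 1,800 snapshots per run. Adding a seventh theme adds C × B × S = 300 more, each with its own rendering cost and its own chance of producing noise.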

Implementation - architecture before tooling

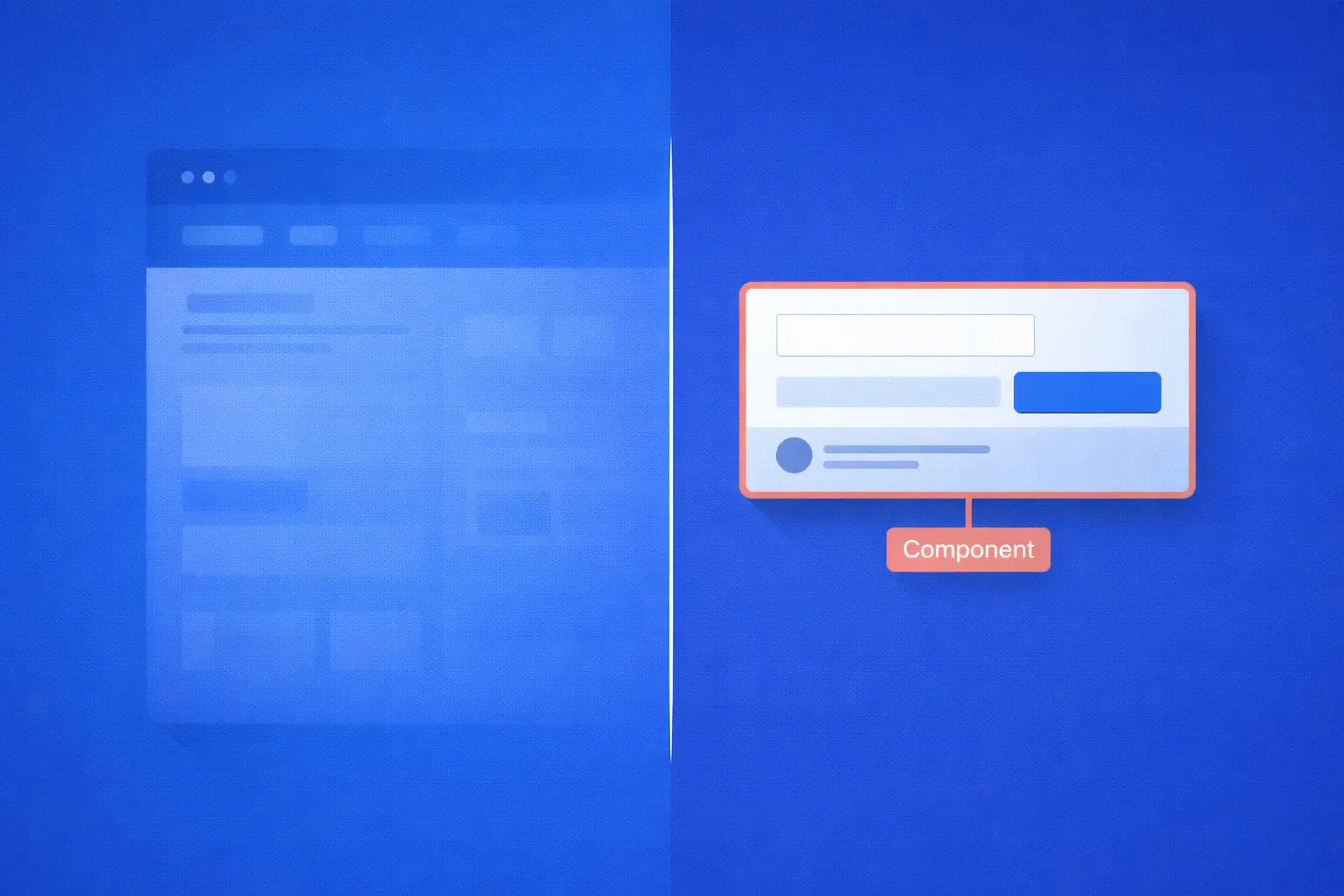

1. Snapshot scope: encode design intent

The most common failure mode is snapshotting entire pages.

Full-page screenshots maximize entropy: dynamic content, personalization, timestamps, and third-party assets all introduce irrelevant diffs. The result is visual noise without actionable signal.

Effective snapshots are scoped to design intent:

- Atomic and composite components

- Stable layout sections with clear ownership

- Explicit brand surfaces (logos, typography specimens, navigation)

Each snapshot must answer a single question:

“Has the visual contract of this element changed?”

If that question cannot be answered unambiguously, the snapshot is poorly designed.
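One way to make that question enforceable is to require it in every snapshot definition. The sketch below assumes a hypothetical `SnapshotSpec` shape. Its field names and the list of page-level selectors are illustrative, not any tool's API. It rejects definitions that capture the whole page or that lack an owner or a stated contract.

```typescript
// Hypothetical snapshot definition; field names are illustrative, not a tool's API.
type SnapshotScope = "component" | "section" | "brand-surface";

interface SnapshotSpec {
  id: string;
  scope: SnapshotScope;
  selector: string;        // root element to capture; never the whole page
  owner: string;           // team accountable for accept/reject decisions
  question: string;        // the single visual contract this snapshot guards
  maskSelectors: string[]; // dynamic regions (timestamps, ads) hidden before capture
}

// Selectors that effectively capture the entire page and therefore maximize noise.
const PAGE_LEVEL_SELECTORS = ["html", "body", ":root", "#root", "#app"];

// Returns a list of design problems; an empty list means the snapshot is well scoped.
function validateSnapshotSpec(spec: SnapshotSpec): string[] {
  const problems: string[] = [];
  const selector = spec.selector.trim().toLowerCase();
  if (PAGE_LEVEL_SELECTORS.indexOf(selector) !== -1) {
    problems.push(`${spec.id}: selector "${spec.selector}" captures the whole page`);
  }
  if (spec.owner.trim() === "") {
    problems.push(`${spec.id}: no owner to accept or reject diffs`);
  }
  if (spec.question.trim() === "") {
    problems.push(`${spec.id}: no visual contract stated`);
  }
  return problems;
}
```

Running the validator when snapshots are registered keeps poorly scoped snapshots from ever producing a baseline. The `maskSelectors` field is where timestamps, personalized fragments, and third-party embeds would be listed, so that the capture step can hide them.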

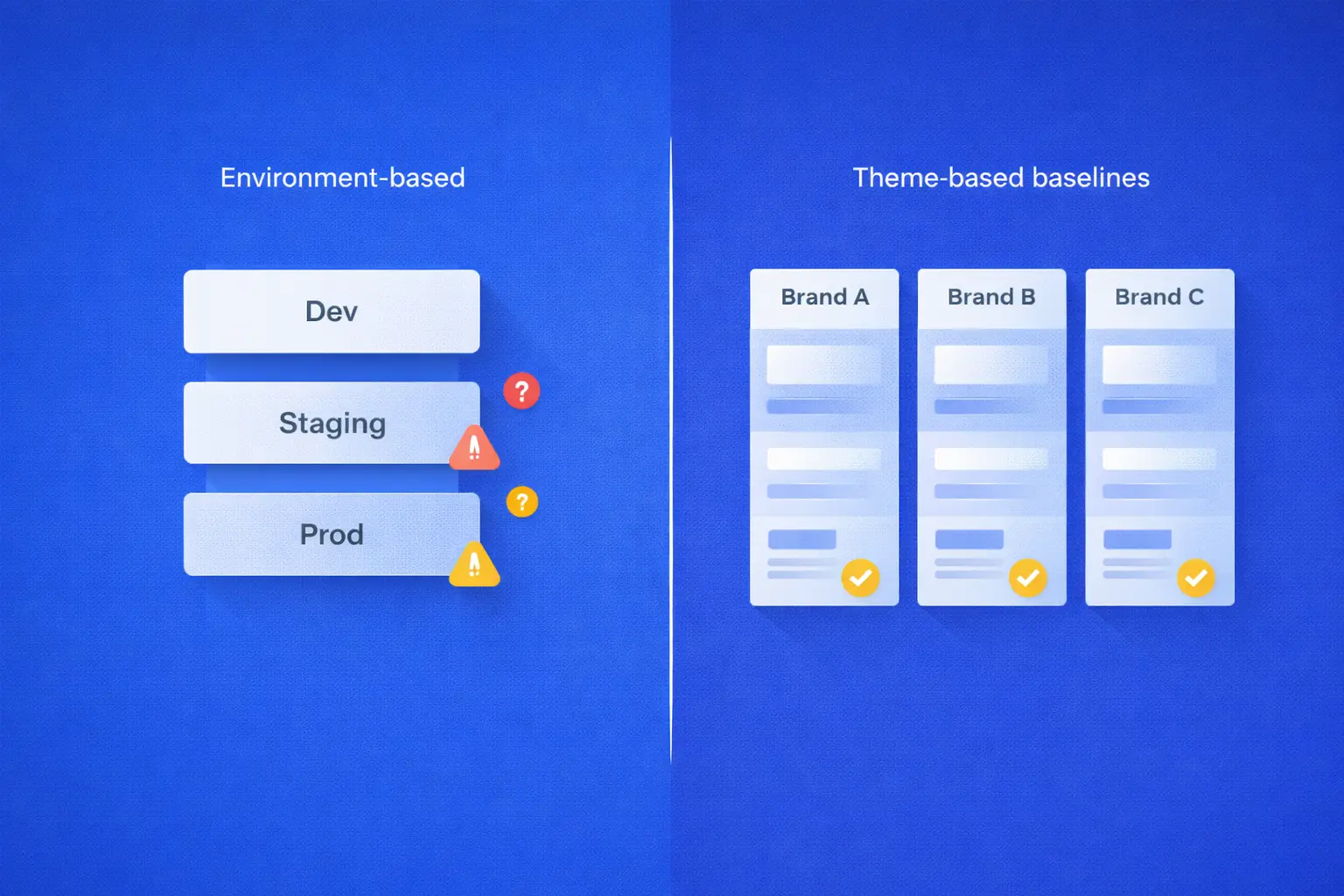

2. Per-theme baselines as a structural necessity

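Baselines should represent visual truth (what a given brand, component, and state should look like), not deployment topology (which environment or region happened to render it).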

A scalable model includes:

- One baseline per brand or theme

- Environment-agnostic rendering inputs

- Baselines versioned alongside the component library

This enables:

- Intentional divergence between brands

- Consistent interpretation of diffs

- Auditable visual change history

Trade-off:

Per-theme baselines increase baseline volume and maintenance overhead. In practice, this cost is usually outweighed by fewer false positives and less review fatigue, which are the primary reasons visual systems lose trust.
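Here is a minimal sketch of per-theme baseline addressing. It assumes baselines are stored as files in the same repository as the component library. `BaselineKey`, `baselinePath`, and the directory layout are hypothetical. Brand is part of the key, so each theme gets its own baseline, and the library version scopes the history. The deployment environment is deliberately absent.

```typescript
// Hypothetical inputs that identify *what* is rendered. There is deliberately
// no field for the deployment environment (staging, production, region).
interface BaselineKey {
  libraryVersion: string; // component library release the baseline belongs to
  brand: string;
  component: string;
  state: string;          // e.g. "default", "hover", "disabled"
  viewport: number;       // CSS pixel width of the locked viewport
}

// Makes brand and component names safe to use as path segments.
function segment(value: string): string {
  return value.trim().toLowerCase().replace(/[^a-z0-9._-]+/g, "-");
}

// Builds a stable, human-auditable relative path for a baseline image.
function baselinePath(key: BaselineKey): string {
  return [
    "baselines",
    segment(key.libraryVersion),
    segment(key.brand),
    segment(key.component),
    `${segment(key.state)}@${key.viewport}w.png`,
  ].join("/");
}
```

For example, the hover state of `Button` under a hypothetical `Acme Blue` theme at a 1280px viewport resolves to `baselines/4.2.0/acme-blue/button/hover@1280w.png`. Every accepted change then shows up in version control as an ordinary file diff.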

3. Thresholding as a layered system, not a setting

Pixel-diff thresholds are necessary but insufficient.

A single global tolerance either:

- hides meaningful regressions, or

- blocks harmless changes

A layered approach scales better:

- Strict or zero tolerance for brand-defining elements (logos, primary colors, typography scale)

- Low tolerance for layout shifts and bounding-box changes

- Higher tolerance for gradients, illustrations, and anti-aliasing artifacts

Thresholds must be component-specific, not global.
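The sketch below expresses the tiers as data and assigns them per component. It assumes screenshots are available as flat RGBA arrays of equal size. `Tier`, `diffRatio`, `passes`, and the ratio values are placeholders for illustration, not recommended settings. A real setup would more likely delegate the pixel comparison to an established diffing library and keep only the tier policy in project code.

```typescript
// Tolerance tiers for the layered approach. The ratios are placeholders,
// not recommendations; calibrate them against your own rendering setup.
type Tier = "brand-critical" | "layout" | "decorative";

// Maximum fraction of changed pixels allowed per tier.
const MAX_DIFF_RATIO: Record<Tier, number> = {
  "brand-critical": 0, // any changed pixel fails
  layout: 0.001,       // up to 0.1% of pixels
  decorative: 0.01,    // up to 1%, absorbing gradient and anti-aliasing noise
};

// Fraction of pixels in which any RGBA channel differs by more than channelTolerance.
// Both buffers are flat RGBA arrays of equal length (4 entries per pixel).
function diffRatio(a: ArrayLike<number>, b: ArrayLike<number>, channelTolerance: number): number {
  if (a.length !== b.length || a.length % 4 !== 0) {
    throw new Error("buffers must be equal-length RGBA arrays");
  }
  const pixels = a.length / 4;
  if (pixels === 0) {
    return 0;
  }
  let changed = 0;
  for (let i = 0; i < a.length; i += 4) {
    for (let c = 0; c < 4; c++) {
      if (Math.abs(a[i + c] - b[i + c]) > channelTolerance) {
        changed++;
        break; // count each pixel at most once
      }
    }
  }
  return changed / pixels;
}

// Looks up the component's own tier (defaulting to "layout") and applies its limit.
function passes(tierByComponent: { [component: string]: Tier }, component: string, ratio: number): boolean {
  const tier: Tier = Object.prototype.hasOwnProperty.call(tierByComponent, component)
    ? tierByComponent[component]
    : "layout";
  return ratio <= MAX_DIFF_RATIO[tier];
}
```

Two tolerances interact here. `channelTolerance` absorbs small per-channel differences such as anti-aliasing, while the tier ratio bounds how many pixels may change at all.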

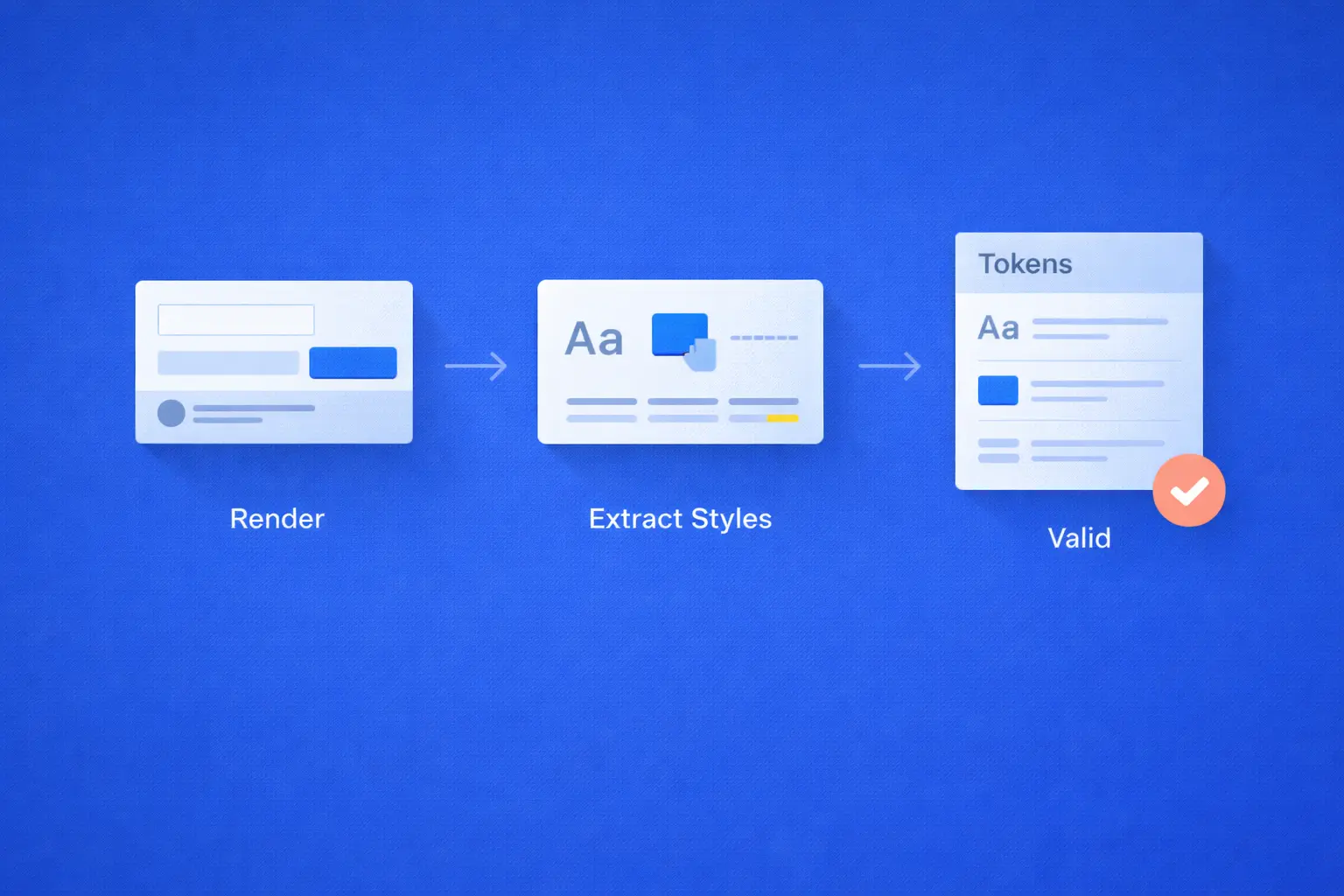

4. Semantic checks: enforcing visual invariants

Certain visual contracts should not rely on pixel inference.

For critical elements, extract a constrained set of computed styles at render time:

- font-family

- font-size and font-weight

- color values

- spacing tokens

These values are compared directly against expected design tokens for the active theme.

This produces two effects:

- Brand violations fail deterministically, independent of pixel noise

- Review conversations shift from “is this diff acceptable?” to “is this change intentional?”

Trade-off:

Semantic checks introduce coupling to token stability. When tokens evolve, failures are immediate and explicit. This is a feature, not a drawback.
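Here is a sketch of the comparison step. It assumes the computed styles for an element have already been collected into a plain record, for instance in a browser via `window.getComputedStyle(element).getPropertyValue(name)`. It also assumes the active theme's expected values are stored in the same property-to-value form. `checkTokens` and `normalize` are hypothetical helpers. Browsers typically serialize computed colors as `rgb()` or `rgba()`, so expected color tokens need to be stored in, or converted to, that form.

```typescript
// Property name -> value, e.g. { "font-weight": "600" }.
type StyleRecord = { [property: string]: string };

interface TokenViolation {
  property: string;
  expected: string;
  actual: string;
}

// Removes formatting differences that are not design differences,
// e.g. "RGB(0, 0, 0)" vs "rgb(0,0,0)" or quoted vs unquoted font names.
function normalize(value: string): string {
  return value
    .toLowerCase()
    .replace(/["']/g, "")
    .replace(/\s*,\s*/g, ",")
    .replace(/\s+/g, " ")
    .trim();
}

// Compares only the properties the theme declares; other computed styles are ignored.
function checkTokens(expected: StyleRecord, actual: StyleRecord): TokenViolation[] {
  const violations: TokenViolation[] = [];
  Object.keys(expected).forEach((property) => {
    const want = expected[property];
    const got = Object.prototype.hasOwnProperty.call(actual, property) ? actual[property] : "";
    if (normalize(want) !== normalize(got)) {
      violations.push({ property, expected: want, actual: got });
    }
  });
  return violations;
}
```

A non-empty result is a deterministic failure that names the exact property, which is what moves review from "is this diff acceptable?" to "is this change intentional?".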

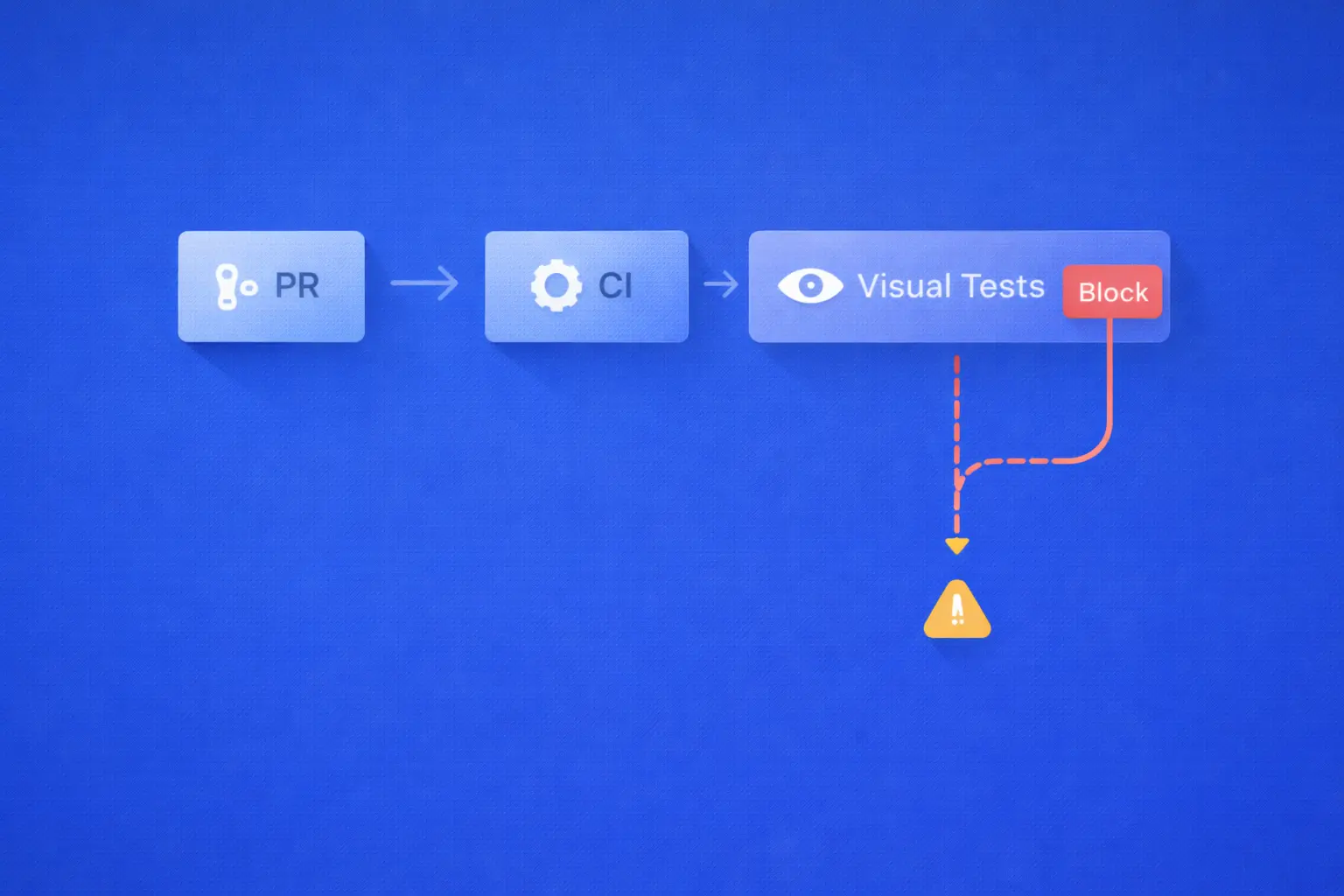

Pipeline integration - signal without indiscriminate gating

Visual regression testing should inform decisions, not blindly enforce them.

A resilient CI model:

- Visual tests run on every relevant change

- Results are published as structured artifacts (diffs with metadata)

- Gating is selective:

  - Blocking for navigation, headers, checkout flows, and primary brand assets

  - Non-blocking for secondary or content-driven surfaces

Each snapshot is explicitly classified at definition time.

This prevents the failure mode where every visual change becomes a red build—and engineers learn to ignore the system entirely.
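Selective gating becomes a small, explicit rule once the classification travels with each result. The sketch below assumes a hypothetical `SnapshotResult` shape produced by the comparison step. Only failures classified as blocking fail the build. The rest become warnings in a report that can be serialized (for example with `JSON.stringify`) and published as a CI artifact.

```typescript
// Classification assigned when the snapshot is defined, carried on every result.
type Gate = "blocking" | "non-blocking";

interface SnapshotResult {
  id: string;
  brand: string;
  gate: Gate;
  diffRatio: number; // fraction of changed pixels
  passed: boolean;
}

interface GateReport {
  failBuild: boolean;
  blockingFailures: string[];
  warnings: string[];
}

// Only blocking failures fail the build; non-blocking ones are surfaced for triage.
function evaluateGate(results: SnapshotResult[]): GateReport {
  const label = (r: SnapshotResult): string =>
    `${r.brand}/${r.id} (diff ${(r.diffRatio * 100).toFixed(2)}%)`;
  const failed = results.filter((r) => !r.passed);
  const blockingFailures = failed.filter((r) => r.gate === "blocking").map(label);
  const warnings = failed.filter((r) => r.gate === "non-blocking").map(label);
  return { failBuild: blockingFailures.length > 0, blockingFailures, warnings };
}
```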

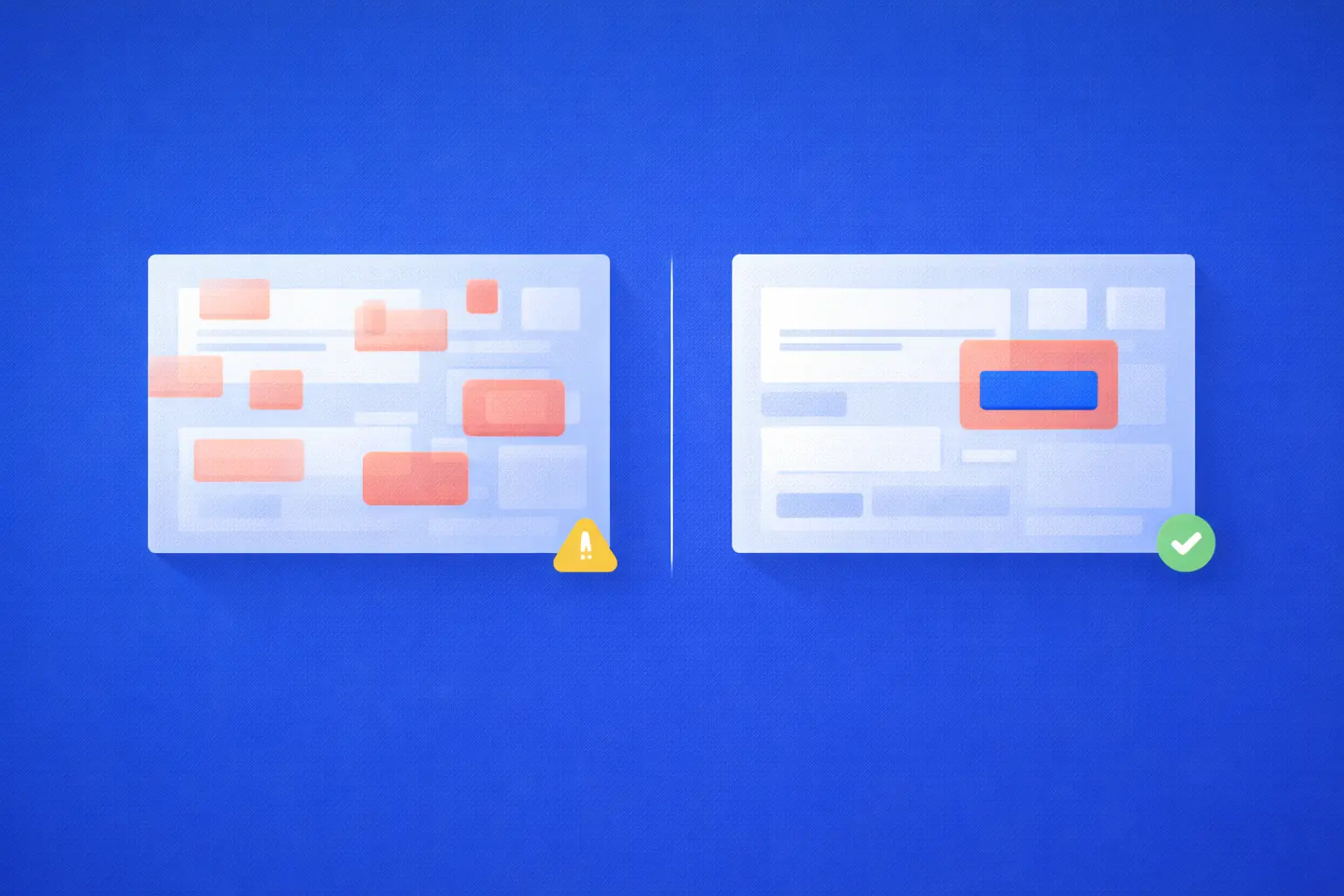

Testing and QA - triage is the system

Triage as a first-class workflow

A visual regression system succeeds or fails at triage.

Effective triage provides:

- Immediate context (before/after, highlighted diffs, component, brand)

- Clear ownership of accept/reject decisions

- Minimal friction for approving intentional changes

QA validates consistency. Design validates intent.

The system must support both without turning diffs into meetings.
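The following sketch shows a triage record that carries the context listed above and enforces ownership of the accept/reject decision. All names are hypothetical. How images are hosted, and how an accepted change is promoted to the new baseline, are left out.

```typescript
type Decision = "pending" | "accepted" | "rejected";

// Everything a reviewer needs in one place; field names are illustrative.
interface TriageItem {
  snapshotId: string;
  component: string;
  brand: string;
  beforeUrl: string; // current baseline image
  afterUrl: string;  // candidate image from this change
  diffUrl: string;   // image with changed regions highlighted
  owners: string[];  // who may accept or reject
  decision: Decision;
  decidedBy?: string;
}

// Records a decision only if the reviewer owns the snapshot and it is still open.
// Returns a new item rather than mutating the input.
function decide(item: TriageItem, reviewer: string, decision: "accepted" | "rejected"): TriageItem {
  if (item.owners.indexOf(reviewer) === -1) {
    throw new Error(`${reviewer} does not own ${item.snapshotId}`);
  }
  if (item.decision !== "pending") {
    throw new Error(`${item.snapshotId} was already ${item.decision}`);
  }
  return { ...item, decision, decidedBy: reviewer };
}
```

Keeping the decision and the decider on the record also yields the auditable visual change history that per-theme baselines are meant to support.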

False positives are design defects

False positives are not operational noise. They are system failures.

Common mitigations include:

- Controlled rendering environments (containerized browsers, fixed fonts)

- Locked viewport sizes and device scale factors

- Stubbed dynamic data

- Reduced motion and animation suppression
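These controls only hold if they are pinned and recorded. One approach, sketched below under assumed names, is to store a description of the rendering environment with each baseline and to refuse comparisons across environments. The fields echo options that browser automation tools such as Playwright expose (viewport, device scale factor, reduced motion, timezone). `RenderEnvironment` itself is hypothetical and not tied to any tool's API.

```typescript
// Hypothetical description of a pinned rendering environment, stored with each baseline.
interface RenderEnvironment {
  browserImage: string;  // container image with a fixed browser build
  fonts: string[];       // exact font files installed in the image
  viewport: { width: number; height: number };
  deviceScaleFactor: number;
  reducedMotion: boolean;
  timezone: string;
  frozenTime: string;    // ISO timestamp injected in place of the real clock
}

// Serializes with sorted object keys so logically equal environments give equal strings.
function stableStringify(value: unknown): string {
  if (Array.isArray(value)) {
    return "[" + value.map(stableStringify).join(",") + "]";
  }
  if (value !== null && typeof value === "object") {
    const obj = value as { [key: string]: unknown };
    const parts = Object.keys(obj)
      .sort()
      .map((k) => JSON.stringify(k) + ":" + stableStringify(obj[k]));
    return "{" + parts.join(",") + "}";
  }
  return JSON.stringify(value);
}

// A diff between different environments measures the environment, not the component.
function assertSameEnvironment(baseline: RenderEnvironment, candidate: RenderEnvironment): void {
  if (stableStringify(baseline) !== stableStringify(candidate)) {
    throw new Error("rendering environment differs from the one that produced the baseline");
  }
}
```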

If a snapshot is routinely ignored, it should be refactored or removed.

Noise is not a user problem—it is a design flaw.
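Whether a snapshot is routinely ignored can be measured rather than guessed, provided triage outcomes are recorded. The sketch assumes a hypothetical log of `DiffOutcome` entries and flags snapshots whose diffs are dismissed more often than a chosen rate. The rate and minimum sample size are illustrative parameters.

```typescript
// One entry per run in which a snapshot produced a diff.
interface DiffOutcome {
  snapshotId: string;
  outcome: "fixed" | "baseline-updated" | "dismissed";
}

// Returns snapshots whose diffs are mostly dismissed: candidates to refactor or remove.
// minSamples avoids judging a snapshot on one or two runs.
function noisySnapshots(history: DiffOutcome[], maxDismissRate: number, minSamples: number): string[] {
  const totals: { [id: string]: { seen: number; dismissed: number } } = {};
  history.forEach((h) => {
    if (!Object.prototype.hasOwnProperty.call(totals, h.snapshotId)) {
      totals[h.snapshotId] = { seen: 0, dismissed: 0 };
    }
    const t = totals[h.snapshotId];
    t.seen++;
    if (h.outcome === "dismissed") {
      t.dismissed++;
    }
  });
  return Object.keys(totals)
    .filter((id) => totals[id].seen >= minSamples && totals[id].dismissed / totals[id].seen > maxDismissRate)
    .sort();
}
```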

Performance and scale

Visual regression testing does not need to be fast everywhere—only where it matters.

Effective cost controls:

- Full visual suites on main branches and release candidates

- Targeted snapshots on feature branches

- Aggressive caching of browsers, fonts, and static assets

Attempting to run the entire visual suite on every commit rarely improves quality. Precision scales better than brute force.
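Here is a sketch of such a selection policy. It assumes branch names follow a `main` / `release/*` convention. It also assumes each snapshot lists the source path prefixes that can affect it, for example derived from the component library's dependency graph. `SnapshotEntry` and `selectSnapshots` are hypothetical. Shared design tokens belong in every snapshot's path list, so a token change still fans out to all affected components.

```typescript
// Hypothetical registry entry: which source paths can change this snapshot.
interface SnapshotEntry {
  id: string;
  sourcePaths: string[]; // path prefixes, e.g. "src/button/" or "tokens/"
}

// Assumed branch convention: main and release/* always run the full suite.
function isFullSuiteBranch(branch: string): boolean {
  return branch === "main" || branch.indexOf("release/") === 0;
}

// Full suite where it matters; only affected snapshots on feature branches.
function selectSnapshots(branch: string, changedFiles: string[], suite: SnapshotEntry[]): string[] {
  if (isFullSuiteBranch(branch)) {
    return suite.map((s) => s.id);
  }
  return suite
    .filter((s) =>
      changedFiles.some((file) => s.sourcePaths.some((prefix) => file.indexOf(prefix) === 0)))
    .map((s) => s.id);
}
```

A change confined to documentation selects nothing, while a change under `tokens/` selects every snapshot that declares that prefix.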

Conclusion

A pragmatic rollout starts with a narrow, brand-critical surface, where baseline discipline and clear triage ownership are established early. Coverage is then expanded deliberately across themes, with snapshots periodically audited to ensure they continue to provide meaningful signal rather than accumulating noise.

Maintenance is an ongoing process. Obsolete snapshots must be removed, thresholds revisited as design systems evolve, and visual failures treated with the same rigor as functional ones.

At scale, pixel perfection is neither achievable nor desirable.

Predictability, not precision, is the only sustainable metric for visual integrity.

Jan 30, 2026