Contracts, Validation, and Silent Risk in Headless CMS Integrations

Headless CMS gives teams speed by splitting responsibilities: editors ship content, applications consume it. The failure mode is split as well — and that is the core risk. In headless systems, a breaking change can occur without a code deploy, without a build, and without an error. Everything can look “green” while the product silently degrades.

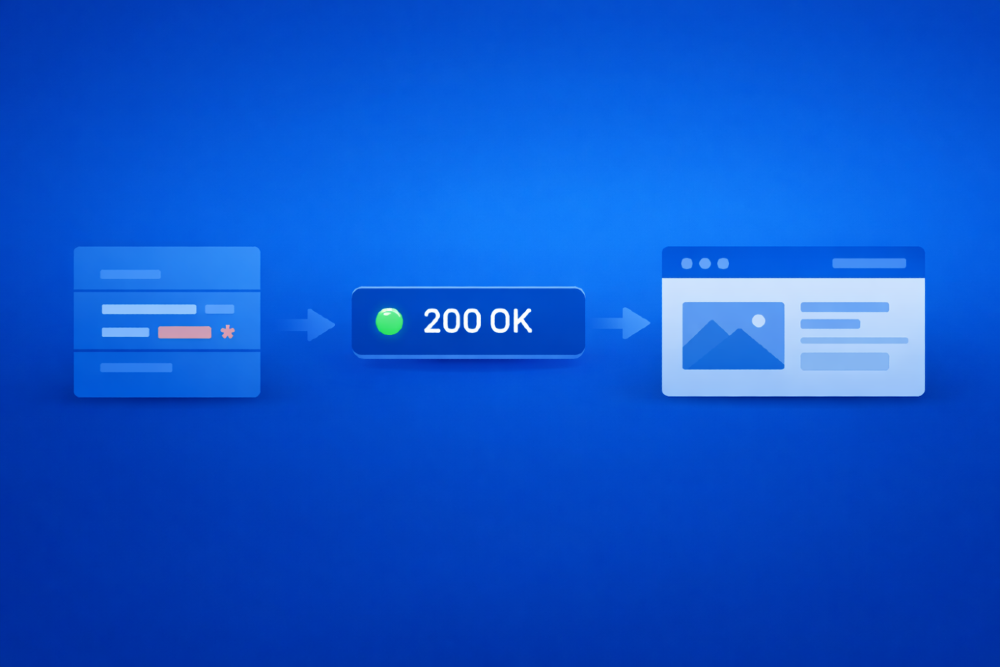

A common near-miss looks like this:

- A content model is adjusted in production (a required field becomes optional “for flexibility”).

- The CMS API continues to return 200 OK.

- The application renders a page without a key element (CTA, price, hero image).

- No alert fires because nothing technically crashed.

If headless content is treated as “just data,” this scenario will repeat. The only durable fix is to treat content models as product interfaces and enforce them like interfaces: with contracts, validation, and observability.

Failure modes that break headless quietly

Schema drift (the primary source of incidents)

A headless CMS is an API. Any schema change is a contract change, regardless of HTTP status.

Typical forms of drift include:

- required → optional (or full field removal)

- field renames

- type changes (

string → object,number → enum) - nested structure changes that alter access paths (

cost→cost.amount)

These changes rarely throw exceptions. Instead, they produce plausible but incorrect output that passes through the system unnoticed.

Diagnostic rule:

If the UI can still render something, you may never notice that it rendered the wrong thing.

Partially valid content (published but unusable)

CMS platforms allow publication of content that is structurally valid but product-invalid:

- empty localizations

- CTAs without URLs

- hero blocks without images

- broken references to linked entries or assets

This is not a UI defect. It is a data-quality failure.

Environment divergence (staging confidence, production reality)

Headless QA often tests the right code against the wrong data:

- staging schema differs from production

- preview API differs from delivery API

- features enabled in CMS but disabled in the application

- caches or CDNs serving stale content

When environments are assumed to be equivalent, silent failures become systemic.

Asynchronous breakage (delayed failures)

Headless delivery relies on asynchronous infrastructure:

- webhooks that fail without retry

- cache invalidation that does not propagate

- replication lag between content stores

- CDN propagation delays

These failures appear hours after a “successful” release and require monitoring rather than pre-release testing.

Baseline: the minimum that prevents most incidents

If no safeguards exist today, do not start by adding more UI tests. Start by enforcing interfaces.

This baseline prevents the majority of headless incidents with low operational cost:

- Define content contracts for critical surfaces

- Validate those contracts against real APIs

- Add synthetic production checks for user-visible outcomes

Everything else is layering, not foundation.

Contracts: content models as interfaces

A contract in a headless system is not documentation. It is an executable specification describing what consumers require in order to render correctly.

A correct contract separates:

- Structure - fields, types, nesting, nullability

- Invariants - business rules required for correctness (e.g. a CTA must have a URL)

Contracts must be:

- versioned

- reviewed

- enforced in CI/CD

A minimal contract example (conceptual):

Validation rules for campaignPage

- title - must exist and be non-empty

- heroImage.url - must exist

- primaryCTA.label - must exist

- primaryCTA.url - must exist

Unexpected properties may be rejected for strict control

Non-negotiable principle:

Schema change = release.

Accepting schema edits in production without release discipline is equivalent to accepting silent failures as normal operation.

Three-layer defense: prevent, detect, contain

Layer 1 - Contract tests (integration level)

Contract tests answer a single question:

Does the real API still satisfy the consumer’s expectations?

This is not frontend end-to-end testing. It is data interface verification.

Effective contract tests validate:

- field presence and requiredness

- data types

- nesting and shape

- allowed null states

They intentionally avoid:

- visual layout

- styling

- pixel-level rendering

Why this layer works:

It fails on the headless failure signature - “200 OK with the wrong shape.”

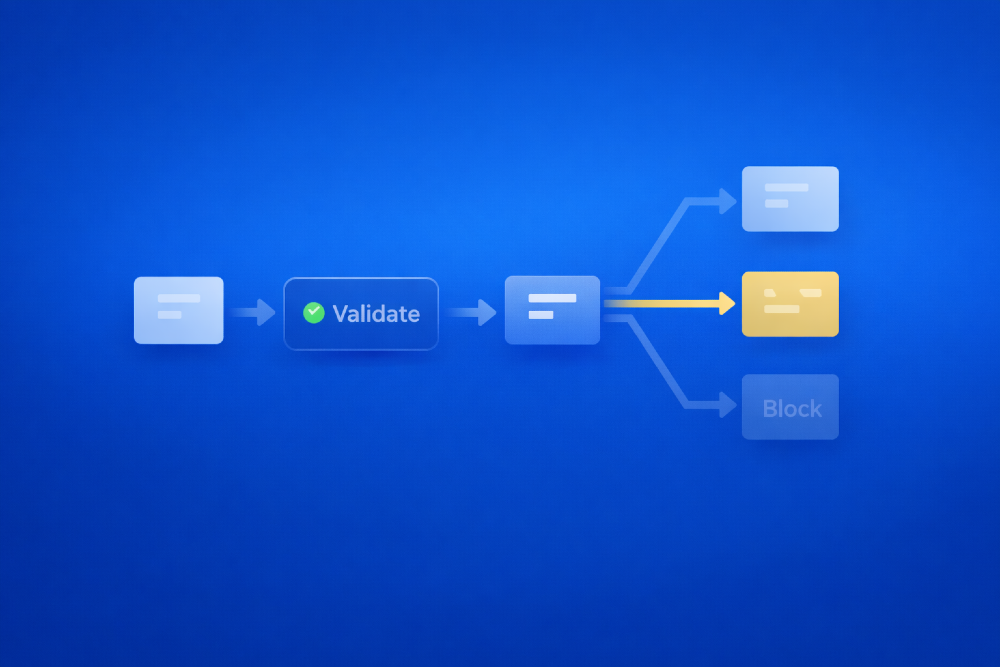

Layer 2 - Validation in the content delivery pipeline

Between fetching content and serving it to the application, validation must occur. This is where both structure and invariants are enforced.

Validation outcomes must be explicit:

valid- safe to servedegraded- safe with controlled fallbackinvalid- must be blocked, rolled back, or quarantined

Every validation run should emit structured events with sufficient diagnostic detail.

Critical decision: what happens on invalid?

Options (chosen per surface):

- reject publication

- block delivery

- serve last-known-good content

- render explicit fallbacks

If this decision is implicit, behavior will be inconsistent under pressure.

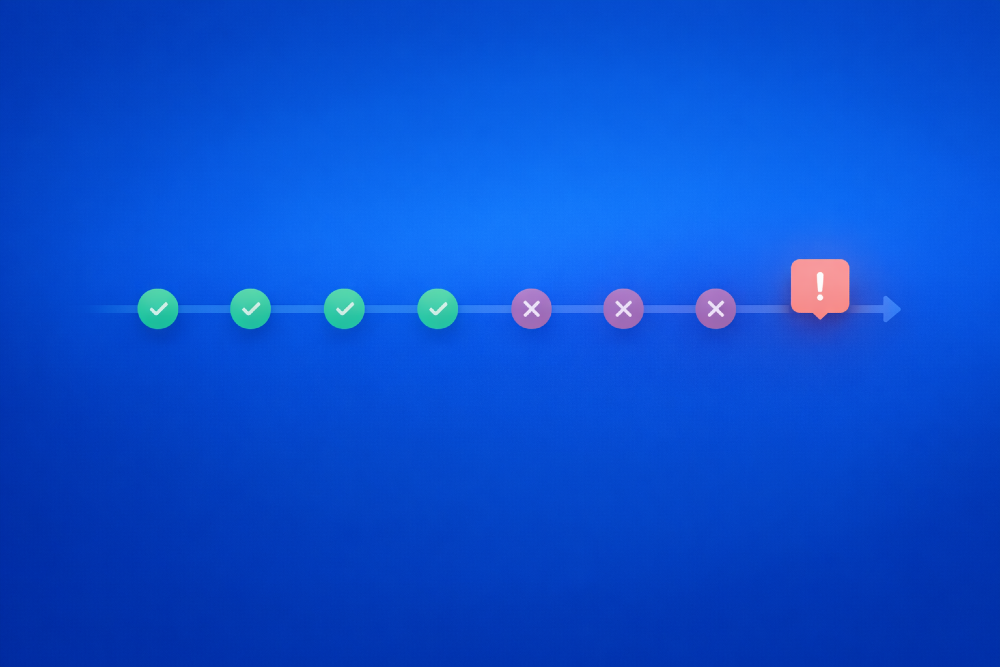

Layer 3 - Synthetic production checks

Because content changes without deployments, production requires continuous verification.

Synthetic checks should be:

- small

- fast

- outcome-oriented

Examples:

- campaign page contains a non-empty hero and CTA link

- product cards include price and image

- navigation includes required top-level items

Run frequently. Alert on patterns, not single failures.

Observability: QA without visibility is ineffective

Validation without visibility creates a false sense of safety.

Validation metrics and alerting

Track:

- invalid content rate (per model)

- degraded content rate

- most frequent missing fields

- invariant violation counts

- validation pipeline health

Example alerting strategies:

- invalid rate exceeds threshold → page on-call

- same field missing across many entries → notify team channel

- validation pipeline down → immediate escalation

A validation pipeline that fails silently is worse than no pipeline at all.

Schema drift monitoring

Schema itself must be observable:

- snapshot on change or on schedule

- diff against last known version

- alert on:

- removed required fields

- type changes

- structural renames

Schema drift is not inherently bad. Uncoordinated drift is.

Mitigation: assume failures will escape

Even with strong prevention, production failures will occur. Design to limit blast radius.

Defined content fallbacks

Fallbacks must be explicit and intentional:

- safe default values where meaning is preserved

- block suppression when data is invalid

- last-known-good content for critical surfaces

Fallbacks must be logged and measured. Silent degradation hides systemic issues.

Feature gates for content-dependent behavior

Content-driven changes require control:

- new optional fields

- new components

- new rendering paths

Feature gates allow rapid isolation of risk when schemas change unexpectedly.

Rollback plans for content and schema

Rollback in headless systems is operational, not version-control-based:

- revert schema changes

- restore prior content versions

- republish last-known-good state

- invalidate caches deterministically

If rollback is not rehearsed, it does not exist.

Ownership: where headless systems usually fail

Most headless incidents are not caused by editor mistakes. They are caused by unclear ownership.

Effective systems assign responsibility explicitly:

- QA owns data-quality signals and contract enforcement outcomes

- Backend / Platform owns schema as an interface and validation infrastructure

- Product Management owns risk: criticality, degradation rules, gates, and rollback decisions

If PM is excluded from contract decisions, teams will either over-constrain content or ship silent failures. There is no stable middle ground.

Conclusion

Headless architecture does not eliminate risk; it shifts it from code into data and from controlled releases into everyday operations. Failures become quieter rather than rarer, which makes them harder to detect and easier to normalize.

A resilient QA strategy in headless systems is built on strict interface discipline. Content models must be treated as contracts, validated at the moment of delivery, and continuously verified in production based on user-visible outcomes. Degradation and failure should be explicitly instrumented, not inferred after the fact, while fallback and rollback paths must be designed deliberately rather than improvised under pressure. Clear ownership across QA, platform, and product roles is essential to prevent silent responsibility gaps.

Silence is not stability.

In headless systems, silence is often the first symptom of a deeper failure.

Jan 30, 2026