Fuzzing is an automated software testing technique that floods an application with unexpected, malformed, or semi-random inputs to expose crashes, validation gaps, and security vulnerabilities that manual testing routinely misses.

In web applications specifically, a fuzzer replays crafted HTTP requests against endpoints, parameters, and headers until something breaks, then captures the evidence so engineers can act on it.

The expected outcomes are concrete: a safer, well-scoped campaign that produces measurable results (5xx spikes, auth bypasses, logic errors), a faster triage loop, and a bug report format your engineering team can act on without back-and-forth.

Whether you are working internally or engaging a web application development firm to conduct it alongside a build engagement, the same discipline applies.

Scope, Safety Limits, and Success Metrics

Before sending a single fuzzed request, write down exactly what you are testing and what you are not. Choose targets by asking which endpoints accept rich user-controlled input search filters, file imports, user-profile fields, and payment flows.

Narrow to the features and roles most likely to conceal logic errors: guest checkout, admin bulk operations, and cross-tenant API calls.

Set non-negotiable safety boundaries before you start. Run exclusively against a dedicated test environment, never production. Cap requests at a sensible rate, 50 to 100 RPS is a reasonable ceiling for most staging stacks.

Set per-campaign concurrency limits (4–8 workers), a hard timeout per request (5 seconds), and a stop condition: if error rate exceeds 20% or the target becomes unresponsive, halt and investigate before continuing.

Define success in concrete, observable terms before you begin. You are looking for HTTP 5xx responses, information disclosures in error bodies, authentication or permission bypasses, invalid application state (records created without required fields, balances that go negative), and abnormal latency spikes that suggest resource exhaustion or infinite loops.

⚠️ One-Line Warning: Running a fuzzing campaign without pre-defined success criteria produces a flood of noise and wastes everyone's time define your signals first, then run.

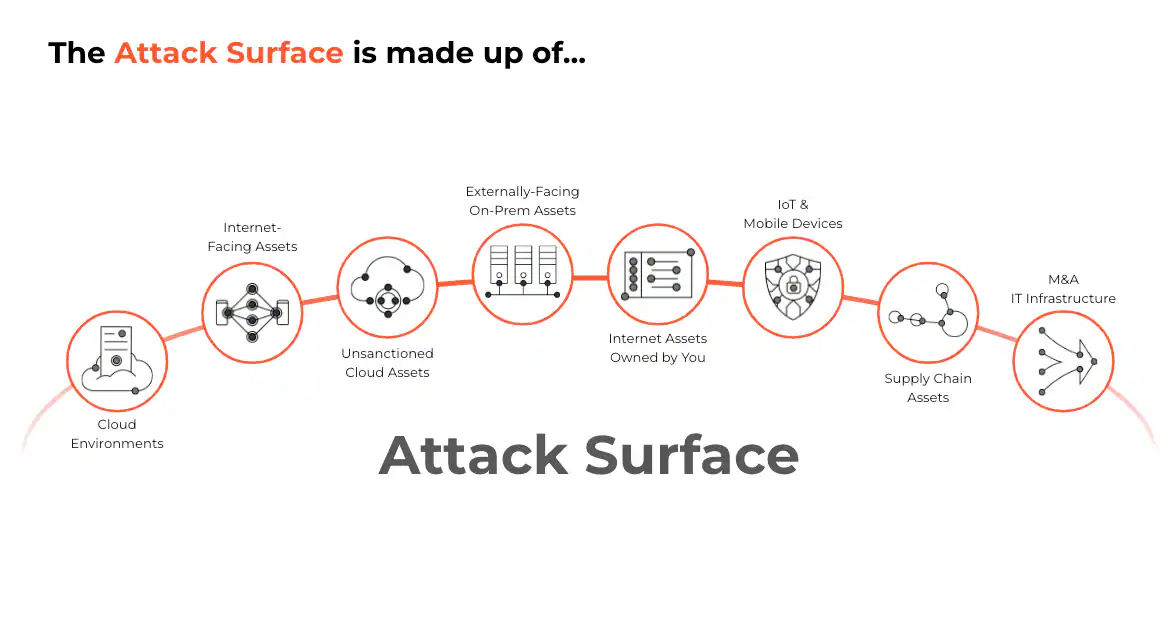

Web Attack Surface: What to Fuzz

Web applications expose more fuzzable surface than most engineers realize. The obvious targets are query parameters, JSON and form bodies, custom headers, cookies, file upload fields, and serialization formats (XML, protobuf, MessagePack). Each of these can be independently mutated and each tends to be validated in a different layer of the stack.

State and auth surfaces deserve separate treatment: session tokens, CSRF tokens, OAuth refresh tokens, role-based access controls, and multi-step workflow state are all worth fuzzing in their own right. They often live at the intersection of business logic and security logic, which makes them especially fragile.

Stateful Fuzzing: Sessions, Tokens, and Workflows

Most interesting bugs in web applications live inside authenticated, multi-step workflows not on the login page. Stateful fuzzing means maintaining a live session across a chain of requests: authenticate, receive a token, use that token to perform an action, then confirm or cancel.

A concrete example:POST /auth/login → POST /orders → POST /orders/:id/confirm. Each step must succeed before the next can be fuzzed meaningfully.

Implement live session handling from day one. Use a cookie jar that persists across requests, refresh short-lived tokens automatically, and re-extract CSRF tokens from each response before replaying the next step.

The most common failure mode in stateful fuzzing campaigns is stale auth: the session expires mid-campaign, every subsequent request gets a 401 or 403, and the fuzzer logs thousands of "errors" that are actually just authentication failures zero real coverage.

Fuzzing Strategies That Work: Mutation vs Generation

Mutation fuzzing starts with a valid, captured request and systematically modifies it flipping bytes, replacing field values, injecting boundary values into existing positions. It is fast to set up and well-suited to formats where valid structure is already known.

The tradeoff is that heavily mutated inputs quickly become syntactically invalid and get rejected at the parser layer before reaching any interesting code path.

Generation fuzzing builds inputs from a schema or grammar: you describe the structure and the fuzzer produces structurally valid but semantically surprising inputs. It reaches deeper code paths because the application does not reject the outer shape of the request, but it requires more upfront investment to define the grammar or schema.

Structure-aware fuzzing is the middle ground you should default to: produce inputs that are partially valid correct outer structure, surprising inner values. A JSON body with correct field names but a 10,000-element nested array reaches far more validation logic than a random byte sequence does.

Stateful fuzzing is not an advanced option it is mandatory for any campaign targeting business workflows. Treat it as a baseline requirement, not a stretch goal.

| Approach | Best When | Tradeoff |

|---|---|---|

| Mutation | Rapid exploration of known surfaces | Shallow coverage; parser rejects invalid shapes early |

| Generation | Well-defined protocols, deep code coverage needed | High setup cost; requires schema or grammar |

| Structure-aware | JSON/XML APIs, most web endpoints | Moderate setup; best depth-to-effort ratio |

| Stateful | Authenticated workflows, business logic | Complex orchestration; mandatory, not optional |

Payload Design Checklist: High-Signal Inputs Only

A good payload list is curated, not exhaustive. Throwing every known injection string at every parameter produces noise. The following checklist covers the categories that most reliably surface real issues in web applications keep each category focused, and resist the urge to import a 50,000-line generic wordlist without filtering it first.

- Boundary values & sizing: Send zero, negative numbers, max integer (2³¹−1, 2⁶³−1), and empty strings; surfaces missing validation and overflow bugs.

- Type confusion: Pass a string where a number is expected, an array for an object field, an integer for a boolean; surfaces parser inconsistencies and type-coercion logic errors.

- Nesting / recursion depth: Send deeply nested JSON or XML (50–100 levels); triggers stack overflows and quadratic parser behavior.

- Encoding & Unicode normalization: Use percent-encoded, double-encoded, and Unicode-normalized variants; surfaces canonicalization and validation bypass bugs.

- Protocol / header variations: Duplicate Accept, Content-Type conflicts, unexpected HTTP methods; surfaces routing and middleware inconsistencies.

- Schema violations: Omit required fields, add undeclared fields, include fields from a different endpoint; surfaces missing validation and mass-assignment vulnerabilities.

- Null / empty / optional edge cases: null JSON values, empty arrays, missing optional keys; surfaces unguarded null-dereferences and default-value assumptions.

- Date / time edge cases: Epoch zero, year 9999, timezone shifts, ISO vs non-ISO formats; surfaces parsing inconsistencies and off-by-one schedule logic.

- ID / reference permutations, Neighbor IDs, IDs belonging to another tenant or role, negative IDs, UUID with wrong version; surfaces IDOR and broken object-level authorization.

- State transitions (out-of-order): Repeat a completed step, skip a required step, replay a one-time token; surfaces business logic bypasses in checkout, approval, and onboarding flows.

Boundary Testing and Type Confusion

Integer boundary testing is the fastest way to find validation gaps and arithmetic logic errors. Send the minimum and maximum values for each relevant integer type (0, −1, 2,147,483,647, 2,147,483,648), plus very large strings (10 KB, 1 MB) for any text field.

Deep nesting a JSON object with 100 layers of recursion, or an array containing 100,000 elements exposes quadratic behavior in parsers and validators. Empty arrays and empty objects are worth including separately because application code frequently handles them differently from null and from a missing key.

Unexpected type substitution is surprisingly effective. Sending a JSON array where the API expects a string ("name": ["admin"]) surfaces coercion assumptions in ORMs and validation libraries. Sending an object where a number is expected ("price": {"$gt": 0}) is a classic NoSQL injection vector.

Where the target stack uses JavaScript or Python both of which do extensive implicit type coercion NaN and Infinity are worth adding to integer-targeting payloads because they pass numeric type checks while breaking arithmetic comparisons downstream.

Encoding and Unicode Edge Cases

Encoding attacks exploit the gap between how an application validates input and how it ultimately uses that input. Percent-encoding (%2F for /) is the most common vector; double-encoding (%252F) works when the application decodes once before validation and again before use.

Mixed encodings UTF-8 and Latin-1 in the same string cause comparison failures in languages that do not normalize before comparing. Malformed byte sequences expose fragile UTF-8 decoders that crash or silently drop characters.

HTTP and Protocol Variations

HTTP-level fuzzing targets the protocol layer rather than application logic. Duplicate headers sending two Content-Type values or two Authorization headers in the same request exposes inconsistent parsing between a reverse proxy and the application server, which can be exploited to smuggle payloads past WAF rules.

Conflicting Content-Type declarations (header says application/json, body is form-encoded) surface assumptions in middleware routing logic. Unexpected HTTP methods (TRACE, CONNECT, or custom verbs) reach code paths that are never tested in normal flows.

Tooling and Observability: Measure Everything

The minimum viable tooling stack has three components: a proxy (Burp Suite or OWASP ZAP) to capture clean seed requests from real user flows, a fuzzer or request generator (ffuf, wfuzz, Burp Intruder, or a custom harness) to drive mutations, and a replay harness that can re-send any captured request with modifications and record the full response.

The OWASP Web Security Testing Guide covers both ZAP-based and ffuf-based approaches in detail.

The signals you collect determine the quality of your findings. At minimum, record HTTP status code, response time, response body length, any error message content, and any exception or stack trace that surfaces in the body.

On the server side, correlate every fuzzed request to application logs and distributed traces using a unique X-Fuzz-Request-ID header that the application logs verbatim. Without this correlation, you will spend more time re-creating requests during triage than running the campaign itself.

Campaign Execution and Triage Workflow

Run a warm-up phase first: send 20–30 valid, unmodified seed requests and verify that all return the expected 2xx status codes and response shapes. This confirms the test environment is healthy before you send anything unusual. Only then begin the mutation phase, ramping up gradually rather than flooding at full capacity from the start.

Corpus management matters as much as the mutations themselves. Capture clean seed requests that exercise distinct code paths one for each endpoint variant, one per role, one per major feature.

Minimize your seeds before mutating: a shorter, cleaner seed request makes mutations more targeted and reduces false positives from unrelated fields. Avoid mutating seeds that already contain errors or edge-case values, as they will contaminate your mutation distribution.

Bug Report Template

| Field | What to Include |

|---|---|

| Minimal Request | The shortest HTTP request that reproduces the issue, with all non-essential fields removed. |

| Repro Steps | Step-by-step from a clean environment: auth state, prerequisite data, exact request sequence. |

| Response | Full HTTP response including status, headers, and body (truncate if > 10 KB). |

| Correlation ID | X-Fuzz-Request-ID value + link to log/trace entry in observability tooling |

| Logs / Traces | Server-side stack trace, relevant log lines, or distributed trace span IDs |

| Impact | What an attacker can achieve: data exposure, auth bypass, DoS, state corruption be specific. |

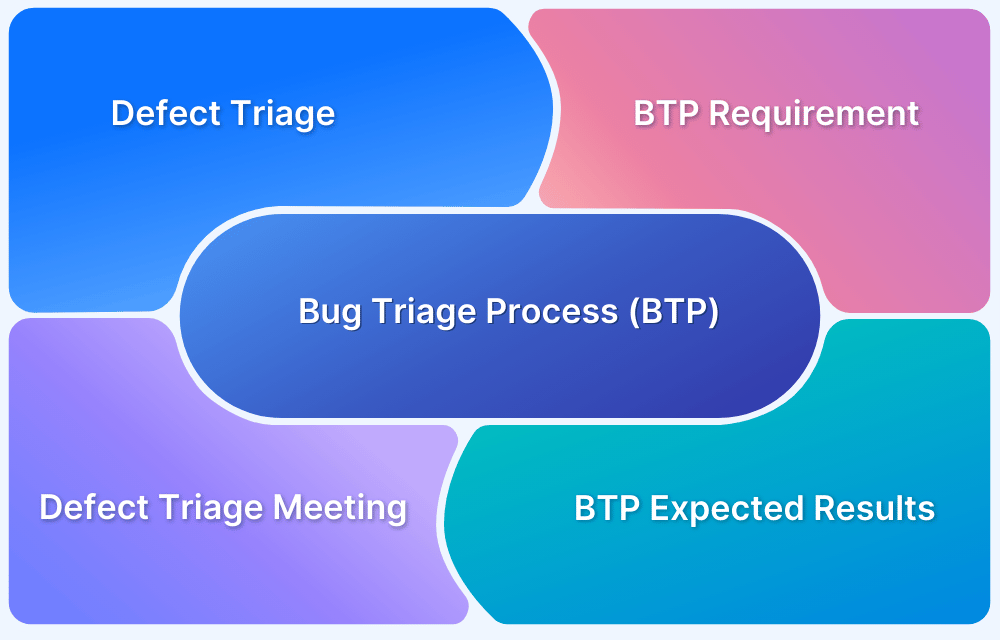

Deduplication to Repro to Minimal Test Case

Every confirmed finding goes through the same four-step process without shortcuts:

- Group similar findings - Cluster by status code + error pattern + affected endpoint. One cluster = one investigation, regardless of how many distinct payloads triggered it.

- Reproduce manually - Re-send the trigger request from a clean session in your proxy. If it reproduces, confirm the finding is stable and not an environmental fluke.

- Minimize the payload - Systematically remove fields, shorten strings, and simplify structures until you have the smallest input that still triggers the behavior. Smaller reproducers are faster to fix and easier to test against once patched.

- Assess impact - Separate the symptom (a 500 error) from the actual impact (an unauthenticated user can read another user's records). Security severity lives in the impact, not the error code.

A finding is "done" when it has a stable, manual reproduction, the smallest possible triggering input, and a clear severity rationale that distinguishes between the observable error and the security or data-integrity consequence.

Conclusion

The full fuzzing workflow follows a disciplined sequence: define your scope and safety limits, map the attack surface, model the stateful workflows for each user role, choose the right mutation or generation strategy, design a curated payload checklist, instrument the application for observability, then execute with a runbook and triage the results into minimal, reproducible bugs.

Safety and success metrics are what separate a productive campaign from a noisy one. A campaign without pre-defined stop conditions, RPS caps, and success signals will generate thousands of findings of dubious value and exhaust the team reviewing them.

A campaign with those guardrails in place produces a handful of high-confidence, high-impact bugs that engineering teams can act on immediately.

The practical next step: pick one workflow in your application preferably an authenticated multi-step flow with financial or data-sensitivity implications instrument it with request-ID logging, snapshot your test database, and run a small, bounded campaign against it this week. One focused campaign on a real feature will teach you more about your application's resilience than any amount of theoretical planning.

Feb 20, 2026