Modern interfaces are no longer defined by static layouts alone. Motion - transitions, micro-interactions, and animated state changes - has become a primary carrier of intent. It communicates causality, reinforces hierarchy, and reduces cognitive load when executed correctly.

Despite this, motion remains one of the least tested surfaces in frontend systems.

Animations are often treated as visual polish: reviewed briefly, merged quickly, and revisited only when something breaks visibly. This approach does not scale. As products grow more complex, motion becomes tightly coupled to state logic and increasingly responsible for subtle yet costly regressions.

Motion is not decoration.

It is behavior unfolding over time.

This article explains why motion requires automated testing, where motion systems actually fail in production, and which techniques reliably reduce regressions without turning CI into a liability.

Why Motion Regressions Are Expensive - and Hard to Detect

Motion failures rarely present as obvious defects. They do not usually crash applications or break layouts. Instead, they erode clarity.

Common regressions include:

- Transitions triggering too early or too late

- Easing curves changed unintentionally

- Missing intermediate states

- Interrupted animations after refactors

- Reduced-motion fallbacks silently broken

- Visual feedback desynchronized from state updates

These issues pass unit tests. They pass integration tests. They often pass visual regression checks that rely on static screenshots.

From the user’s perspective, the interface becomes unreliable:

- “Did that action register?”

- “Is the interface responsive?”

- “Why does this feel slower than before?”

From an engineering perspective, motion regressions are expensive because they are discovered late, debated subjectively, and difficult to reproduce consistently.

Automation exists to reduce exactly this class of risk.

Why Traditional Testing Misses Motion

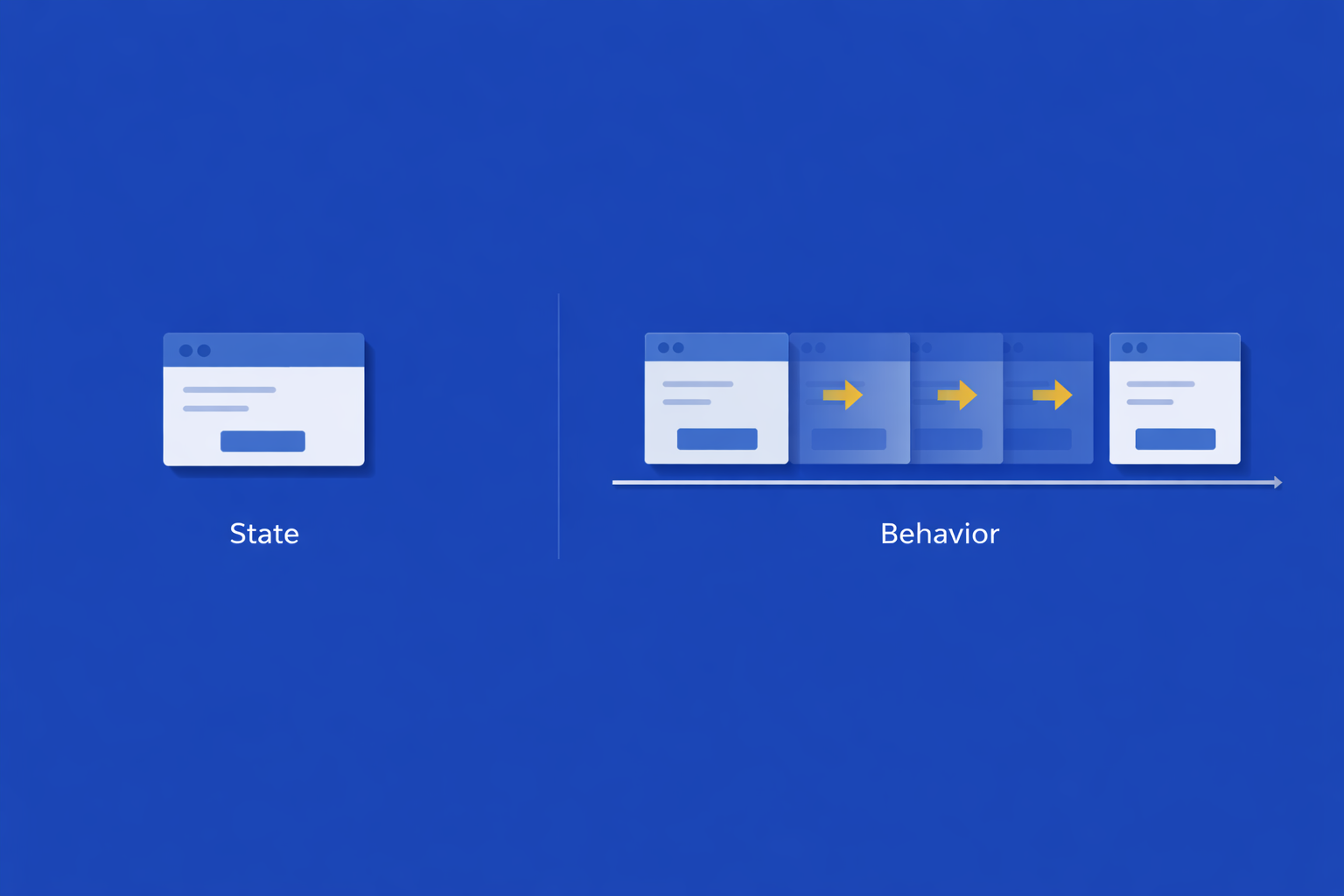

Most frontend test strategies operate on state, not time.

- Unit tests validate logic in isolation.

- Integration tests assert DOM structure or component state.

- Visual regression tests compare static snapshots.

Motion, however, is behavior unfolding over time. It is neither a single state nor a single frame.

A DOM assertion can confirm that an element exists, but not when it appeared.

A screenshot can confirm a final layout, but not how it arrived there.

As motion complexity increases - shared timing tokens, orchestrated transitions, design-system abstractions - the gap widens. Reduced-motion variants widen it further, as they are rarely exercised by default.

The result is predictable: motion becomes an untested dependency.

How Motion Actually Fails in Production

Across real systems, motion regressions tend to cluster into three failure modes.

Timing Failures

Timing failures occur when animations technically run but no longer align with user expectations.

A transition completes instantly instead of easing in. Visual feedback arrives after state has already changed. Loading indicators appear too late to reassure the user.

These failures typically originate from:

- CSS specificity conflicts

- Refactors that override shared timing tokens

- Conditional logic that bypasses animation paths

Nothing is broken in state. The failure exists entirely in the temporal dimension.
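One of these origins, refactors that override shared timing tokens, can be caught before any browser runs. Below is a minimal sketch. It assumes timing tokens are exported as plain millisecond numbers and that design has agreed on acceptable ranges. Both the token names and the ranges are hypothetical. The check does not cover CSS specificity conflicts, which still need runtime inspection.

```javascript
// Hypothetical shared timing tokens (ms), e.g. exported by a design-system package.
const motionTokens = {
  durationFast: 120,
  durationBase: 240,
};

// Hypothetical ranges agreed with design. A token outside its range, such as a
// duration overridden to 0 during a refactor, is reported as a regression.
const tokenBounds = {
  durationFast: [80, 200],
  durationBase: [150, 400],
};

// Returns human-readable violations; an empty array means every bounded token conforms.
function checkTimingTokens(tokens, bounds) {
  const violations = [];
  for (const [name, [min, max]] of Object.entries(bounds)) {
    const value = tokens[name];
    if (typeof value !== 'number') {
      violations.push(`${name}: missing or not a number`);
    } else if (value < min || value > max) {
      violations.push(`${name}: ${value}ms outside [${min}, ${max}]`);
    }
  }
  return violations;
}

// checkTimingTokens(motionTokens, tokenBounds) returns [].
// checkTimingTokens({ ...motionTokens, durationBase: 0 }, tokenBounds)
// returns ['durationBase: 0ms outside [150, 400]'].
```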

Coordination Failures

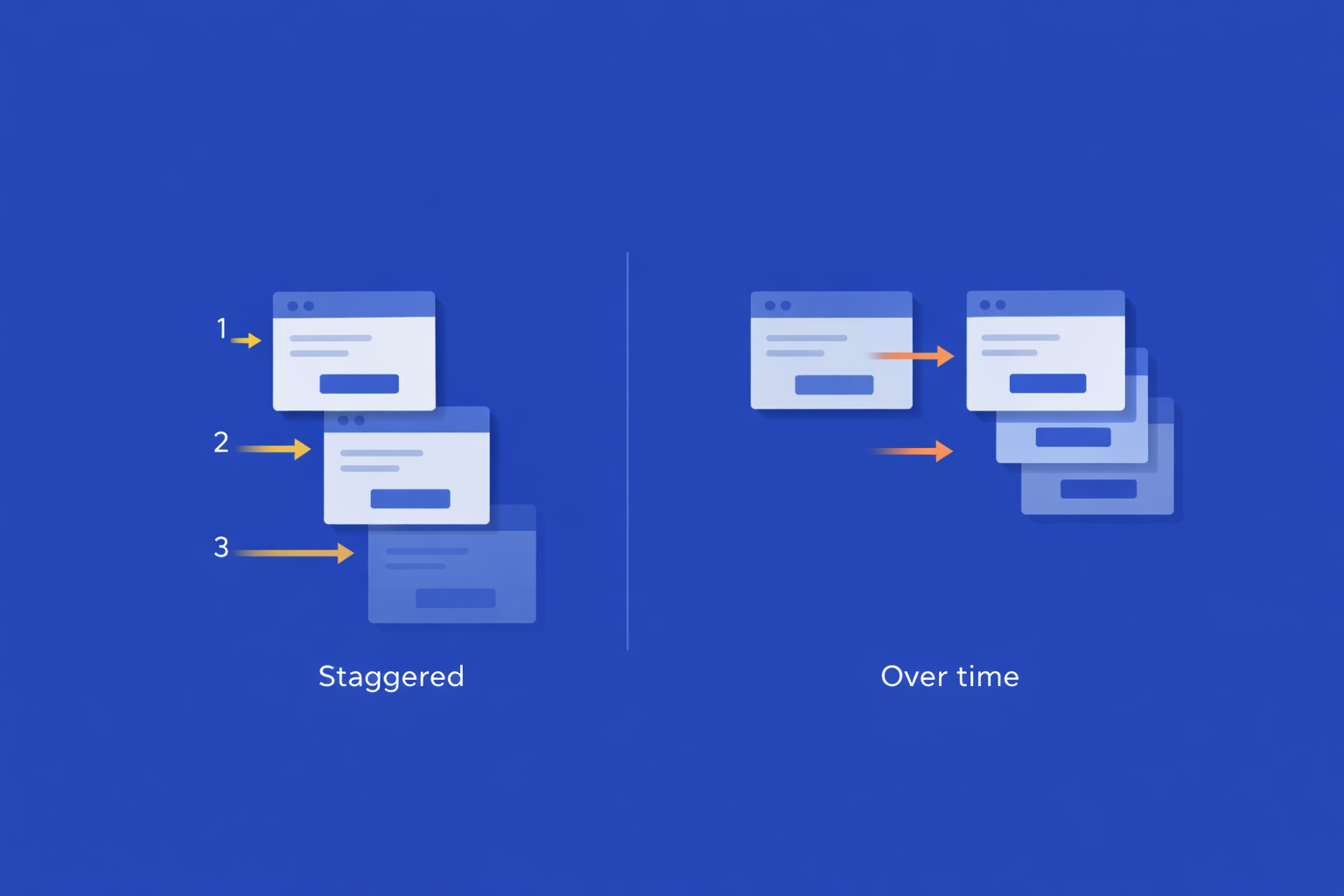

Coordination failures occur when multiple animated elements lose their intended sequencing.

Examples include:

- Staggered lists collapsing into simultaneous entry

- Overlapping transitions triggered by shared state updates

- Orchestrated animations desynchronizing after refactors

In information-dense interfaces, these regressions increase cognitive load. Users lose the visual order motion was meant to establish.
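Stagger regressions can be expressed as a constraint on start times. Below is a minimal sketch. It assumes per-item start timestamps have already been collected, for instance from each Animation's startTime property in the Web Animations API. The helper name checkStagger and the 30 ms minimum gap are hypothetical.

```javascript
// Checks that list items start in order, each at least `minGapMs` after the previous one.
// `startTimes` are start timestamps (ms) recorded per item, in DOM order.
function checkStagger(startTimes, minGapMs) {
  const problems = [];
  for (let i = 1; i < startTimes.length; i++) {
    const gap = startTimes[i] - startTimes[i - 1];
    if (gap < minGapMs) {
      problems.push({ index: i, gap });
    }
  }
  return problems;
}

// Intended 40 ms stagger, checked with some tolerance (30 ms minimum gap): no problems.
const intended = checkStagger([0, 40, 80, 120], 30);
// Regression: items collapse into simultaneous entry, so every gap is reported.
const collapsed = checkStagger([0, 0, 0, 0], 30);
```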

Behavioral Failures

Behavioral failures violate the user’s mental model of interaction.

Examples include:

- Animations responding to the wrong input axis

- Scroll-linked motion bound to incorrect properties

- Hover or focus animations triggering during unrelated interactions

These issues are difficult to detect because functional tests only verify that interactions are possible - not that motion responds correctly under interaction.
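For the first behavioral failure, motion on the wrong input axis, one option is to sample the element's position during a scripted gesture. The samples could come from Playwright's page.mouse and locator.boundingBox(), for example. The test then checks which axis actually moved. Below is a minimal sketch of that check, with hypothetical sample data and an assumed dominance ratio of 2.

```javascript
// Given sampled positions {x, y} (px) captured during a gesture, report which axis
// carried most of the motion. An axis "dominates" when its total travel is at
// least `ratio` times the other axis's travel.
function dominantAxis(samples, ratio = 2) {
  let dx = 0;
  let dy = 0;
  for (let i = 1; i < samples.length; i++) {
    dx += Math.abs(samples[i].x - samples[i - 1].x);
    dy += Math.abs(samples[i].y - samples[i - 1].y);
  }
  if (dx === 0 && dy === 0) return 'none';
  if (dx >= dy * ratio) return 'x';
  if (dy >= dx * ratio) return 'y';
  return 'mixed';
}

// A horizontal swipe that moves the panel horizontally, as intended.
const swipe = [{ x: 0, y: 0 }, { x: 40, y: 1 }, { x: 90, y: 2 }, { x: 120, y: 2 }];
// The same swipe after a regression binds the animation to the vertical axis.
const regressed = [{ x: 0, y: 0 }, { x: 2, y: 30 }, { x: 3, y: 70 }];
// dominantAxis(swipe) returns 'x'; dominantAxis(regressed) returns 'y'.
```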

Approaches That Work (and Their Limits)

There is no single technique that fully solves motion testing. Teams that succeed combine complementary approaches.

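A first layer is behavioral assertion: checking that motion respects declared intent. For example, a Playwright test can verify that a drawer honors the reduced-motion preference:

```javascript
import { test, expect } from '@playwright/test';

test('respects reduced motion', async ({ page }) => {
  // Assumes the page under test is already loaded (e.g. via page.goto in a beforeEach hook).
  // Emulate the OS-level reduced-motion preference for this page.
  await page.emulateMedia({ reducedMotion: 'reduce' });

  await page.click('[data-testid="open-drawer"]');

  // With motion reduced, the drawer should appear almost immediately.
  const drawer = page.locator('[data-testid="drawer"]');
  await expect(drawer).toBeVisible({ timeout: 100 });

  // The computed `transition` shorthand can serialize as a full value such as
  // 'all 0s ease 0s' instead of 'none', so assert on resolved durations instead.
  const durations = await drawer.evaluate(el =>
    getComputedStyle(el).transitionDuration.split(',').map(parseFloat)
  );
  expect(durations.every(d => d === 0)).toBe(true);
});
```

These tests do not validate aesthetics.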

They validate intent.

Frame Sampling and Motion Metrics

A more analytical approach inspects motion signals rather than pixels:

- Opacity and transform values

- Bounding box positions

- Start and completion timestamps

Assertions are expressed as constraints, not exact values.

A typical motion contract enforces boundaries such as:

- Visual feedback appears ≤ 100 ms after user action

- Opacity reaches ≥ 0.95 within an expected window

- Translation progresses monotonically along the primary axis

- Final state stabilizes before interaction resumes
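The contract above can be checked against a handful of sampled values. Below is a minimal sketch. It assumes samples of { t, opacity, translate } have already been captured, with t in ms after the user action. The function name, sample shape, 400 ms opacity deadline, and two-sample stability rule are illustrative choices, not fixed standards. The stability check is only a proxy for "before interaction resumes".

```javascript
// Checks sparse samples against a motion contract; returns the names of failed clauses.
function checkMotionContract(samples, {
  maxFeedbackMs = 100,
  opacityTarget = 0.95,
  opacityDeadlineMs = 400,
  stableSamples = 2,
} = {}) {
  const failures = [];
  const first = samples[0];
  const last = samples[samples.length - 1];

  // Feedback: the first sample whose state differs from the initial one.
  const feedback = samples.find(
    s => s.opacity !== first.opacity || s.translate !== first.translate
  );
  if (!feedback || feedback.t > maxFeedbackMs) failures.push('feedback');

  // Opacity reaches the target before the deadline.
  const opaque = samples.find(s => s.opacity >= opacityTarget);
  if (!opaque || opaque.t > opacityDeadlineMs) failures.push('opacity');

  // Translation never steps against the overall direction of travel.
  const direction = Math.sign(last.translate - first.translate);
  const reverses = samples.some(
    (s, i) => i > 0 && direction !== 0 &&
      Math.sign(s.translate - samples[i - 1].translate) === -direction
  );
  if (reverses) failures.push('monotonic');

  // Stability: the last `stableSamples` samples all match the final state.
  const stable = samples
    .slice(-stableSamples)
    .every(s => s.opacity === last.opacity && s.translate === last.translate);
  if (!stable) failures.push('stable');

  return failures;
}

const good = [
  { t: 0, opacity: 0, translate: 24 },
  { t: 50, opacity: 0.4, translate: 14 },
  { t: 100, opacity: 0.8, translate: 6 },
  { t: 150, opacity: 0.97, translate: 1 },
  { t: 200, opacity: 1, translate: 0 },
  { t: 250, opacity: 1, translate: 0 },
];
// Feedback only appears at 150 ms, after the 100 ms budget.
const late = [
  { t: 0, opacity: 0, translate: 24 },
  { t: 100, opacity: 0, translate: 24 },
  { t: 150, opacity: 1, translate: 0 },
  { t: 200, opacity: 1, translate: 0 },
];
```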

Per-frame comparison is usually avoided. A common strategy is sparse sampling: five to seven frames captured at deterministic timestamps across the animation duration. This catches structural regressions without inheriting frame-to-frame GPU timing noise.
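Choosing those deterministic timestamps is simple arithmetic. Below is a minimal sketch that assumes evenly spaced samples including both endpoints. sampleTimestamps is a hypothetical helper, not part of any library. In a browser test, each timestamp could then be applied via currentTime on a paused Animation.

```javascript
// Returns `count` evenly spaced timestamps (ms) from 0 to `durationMs` inclusive,
// rounded to whole milliseconds so every run seeks to exactly the same points.
function sampleTimestamps(durationMs, count) {
  if (count < 2) throw new RangeError('count must be at least 2');
  const step = durationMs / (count - 1);
  return Array.from({ length: count }, (_, i) => Math.round(i * step));
}

// sampleTimestamps(300, 7) returns [0, 50, 100, 150, 200, 250, 300].
// sampleTimestamps(300, 5) returns [0, 75, 150, 225, 300].
```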

Visual Diffing Across Time

Visual regression systems integrate well with CI and review workflows. They are effective at detecting unintended visual changes in components and page-level transitions.

However, visual diffing is inherently frame-based. If an animation’s start and end states remain unchanged, regressions that occur mid-transition often go unnoticed.

Visual diffing should be treated as a detection layer - not a complete solution.
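The mid-transition blind spot is easy to show numerically. The sketch below compares two progress curves: a linear one and an ease-out cubic, 1 - (1 - t)^3. The cubic is used here only as an example of an unintentionally changed curve. Both curves start at 0 and end at 1, so start and end snapshots match, but halfway through they differ by 0.375.

```javascript
// Two progress curves that share start and end values but differ mid-transition.
const linear = t => t;
const easeOutCubic = t => 1 - Math.pow(1 - t, 3);

// Compare the curves at normalized times (0 = start, 1 = end).
function diffAt(times, a, b) {
  return times.map(t => ({ t, delta: Math.abs(a(t) - b(t)) }));
}

// Endpoints only: every delta is 0, so a start/end comparison would pass.
const endpoints = diffAt([0, 1], linear, easeOutCubic);
// Adding a midpoint exposes the change: 0.5 versus 0.875 at t = 0.5.
const withMidpoint = diffAt([0, 0.5, 1], linear, easeOutCubic);
```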

Making Motion Deterministic

Most motion-related flakiness originates from non-deterministic timing.

A common anti-pattern is replacing requestAnimationFrame with setTimeout. This appears to work in isolated tests but does not reflect real browser scheduling behavior.

A more reliable approach is explicit frame control.

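```javascript
// Replaces window.requestAnimationFrame with a manually stepped queue, so tests
// decide when each frame runs and which timestamp it receives.
// Code that reads performance.now() or Date.now() directly is not controlled by this.
class RAFController {
  constructor() {
    this.timestamp = 0;
    this.queue = [];
    this.id = 0;
    this.cancelled = new Set();
    this.originalRAF = window.requestAnimationFrame;
    this.originalCAF = window.cancelAnimationFrame;
    window.requestAnimationFrame = this.raf.bind(this);
    window.cancelAnimationFrame = this.cancel.bind(this);
  }

  raf(callback) {
    const id = ++this.id;
    this.queue.push({ id, callback });
    return id;
  }

  // Patched as well; otherwise cancelAnimationFrame would target the real browser queue.
  cancel(id) {
    this.cancelled.add(id);
    this.queue = this.queue.filter(entry => entry.id !== id);
  }

  step(frames = 1, delta = 16.67) {
    for (let i = 0; i < frames; i++) {
      this.timestamp += delta;
      // Snapshot the queue: callbacks scheduled during this frame run on the next one.
      const callbacks = this.queue.splice(0);
      for (const { id, callback } of callbacks) {
        // Skip callbacks cancelled earlier in this same frame, as browsers do.
        if (!this.cancelled.has(id)) callback(this.timestamp);
      }
      this.cancelled.clear();
    }
  }

  restore() {
    window.requestAnimationFrame = this.originalRAF;
    window.cancelAnimationFrame = this.originalCAF;
  }
}
```

This allows tests to advance animation state deterministically and assert intermediate conditions without relying on real-time delays.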
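The RAFController above patches the global. An alternative applies if animation code can accept its frame scheduler as a parameter, which is a hypothetical design choice rather than something the controller requires. In that case, the same deterministic stepping works without touching window and even runs outside a browser. Below is a minimal sketch; ManualFrameClock and fadeIn are illustrative names.

```javascript
// A manually stepped frame clock that is injected instead of patching window.
class ManualFrameClock {
  constructor(frameMs = 1000 / 60) {
    this.now = 0;
    this.frameMs = frameMs;
    this.pending = new Map(); // id -> callback
    this.nextId = 0;
  }

  request(callback) {
    const id = ++this.nextId;
    this.pending.set(id, callback);
    return id;
  }

  cancel(id) {
    this.pending.delete(id);
  }

  step(frames = 1) {
    for (let i = 0; i < frames; i++) {
      this.now += this.frameMs;
      // Snapshot ids so callbacks scheduled during this frame wait for the next one;
      // re-check membership so a callback cancelled mid-frame is skipped.
      for (const id of [...this.pending.keys()]) {
        const callback = this.pending.get(id);
        if (!callback) continue;
        this.pending.delete(id);
        callback(this.now);
      }
    }
  }
}

// Hypothetical animation code that receives its scheduler as a dependency.
function fadeIn(el, durationMs, raf) {
  let start = null;
  const tick = ts => {
    if (start === null) start = ts;
    const progress = Math.min((ts - start) / durationMs, 1);
    el.opacity = progress;
    if (progress < 1) raf(tick);
  };
  raf(tick);
}

const clock = new ManualFrameClock(50); // 50 ms frames keep the arithmetic readable
const el = { opacity: 0 };
fadeIn(el, 200, cb => clock.request(cb));
clock.step(1); // first frame records the start time: opacity 0
clock.step(2); // 100 ms after start: opacity 0.5
clock.step(2); // 200 ms after start: opacity 1, and the loop stops scheduling
```

Stepping in whole frames makes intermediate states, such as the 0.5 opacity after 100 ms, directly assertable without real-time waits.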

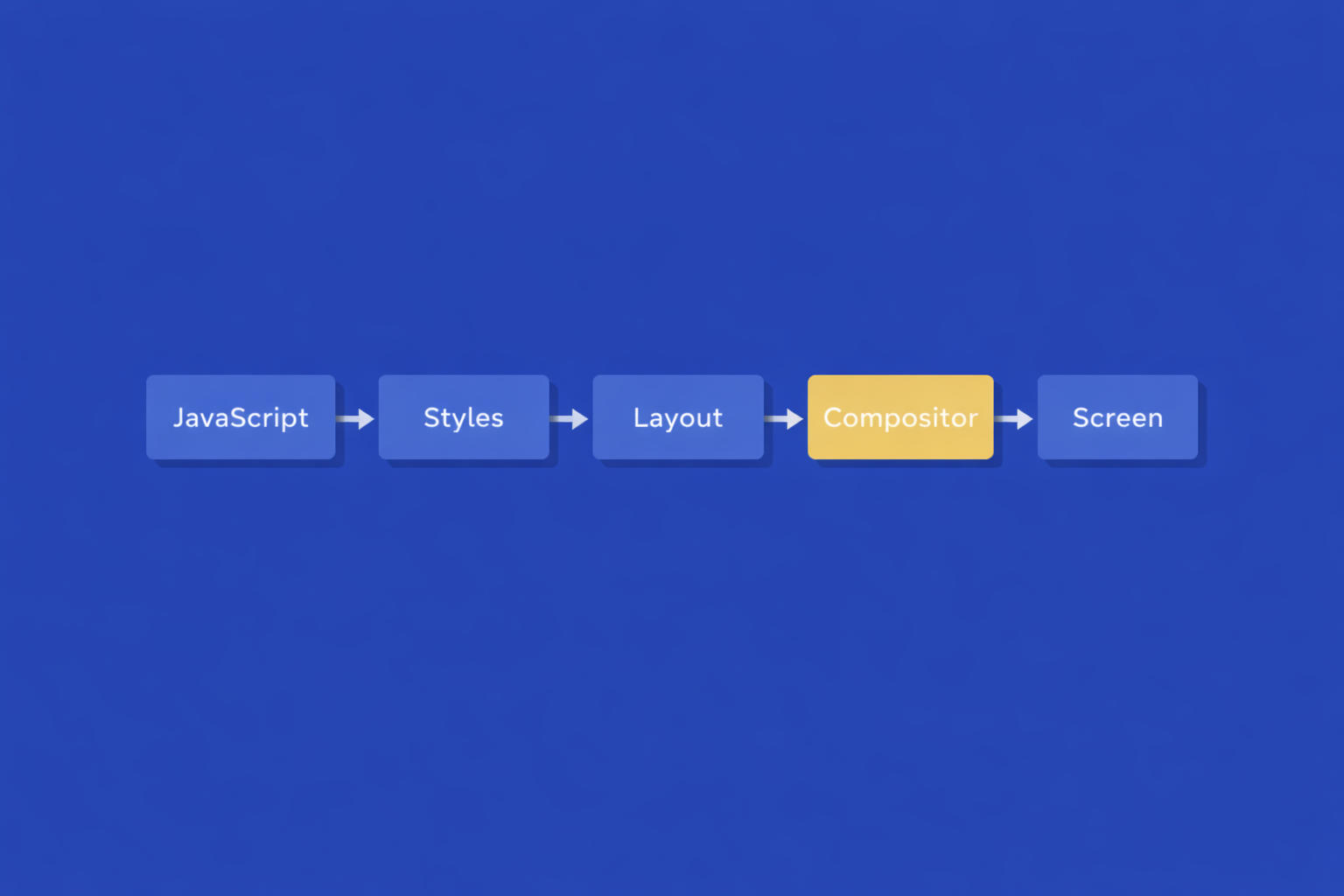

CSS Animations and the Compositor

CSS-driven motion introduces additional complexity. GPU-accelerated transforms may not be immediately reflected in computed styles.

When inspecting CSS animations:

- Prefer the Web Animations API when possible

- Use getAnimations() to inspect animation state

- Allow a frame for compositor flush before assertions

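```javascript
// Inside an async test running in the browser context.
const [animation] = element.getAnimations();
if (!animation) throw new Error('Expected a running animation on element');

// Pause first so the animation stays at the seeked time instead of playing on.
animation.pause();
animation.currentTime = 150;

// Wait one frame so the seeked state is applied before reading styles.
await new Promise(resolve => requestAnimationFrame(resolve));

const transform = getComputedStyle(element).transform;
```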
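Computed transforms are serialized as resolved matrices, so the transform string read in the snippet above usually looks like matrix(...) rather than translateY(...). Below is a minimal sketch for extracting the translation. It only handles translation and does not decompose rotation or scale. parseTranslation is a hypothetical helper name.

```javascript
// Extracts the translation from a computed `transform` value, which resolves to
// 'none', 'matrix(a, b, c, d, e, f)' or 'matrix3d(...16 values)'.
function parseTranslation(transform) {
  if (!transform || transform === 'none') return { x: 0, y: 0 };
  const match = transform.match(/^matrix(3d)?\((.+)\)$/);
  if (!match) throw new Error(`Unrecognized transform: ${transform}`);
  const values = match[2].split(',').map(Number);
  return match[1]
    ? { x: values[12], y: values[13] } // matrix3d: translation is the 13th and 14th values
    : { x: values[4], y: values[5] };  // matrix: translation is e and f
}

// parseTranslation('matrix(1, 0, 0, 1, 0, 68.4)') returns { x: 0, y: 68.4 }.
```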

Selective Testing by Criticality

Not all animations carry equal risk.

A practical categorization:

- Tier 1 - Primary user flows and state-signaling animations

- Tier 2 - Navigation and layout transitions

- Tier 3 - Decorative micro-interactions

Tier 1 runs on every pull request.

Tier 2 runs on scheduled pipelines.

Tier 3 relies on behavioral assertions only.

This keeps signal high and CI cost predictable.
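The tiering can live in a small policy table that a CI script consults. Below is a minimal sketch with several assumptions. The trigger names, the checks assigned to each tier, and the choice to run tier-3 behavioral assertions on pull requests are all guesses, not a prescribed configuration. The animation ids are hypothetical.

```javascript
// Hypothetical policy mirroring the tiers above.
const TIER_POLICY = {
  1: { triggers: ['pull_request', 'scheduled'], checks: ['behavioral', 'frame-sampling', 'visual-diff'] },
  2: { triggers: ['scheduled'], checks: ['behavioral', 'frame-sampling', 'visual-diff'] },
  3: { triggers: ['pull_request', 'scheduled'], checks: ['behavioral'] },
};

// Returns the checks to run for each animation under the given CI trigger.
function planMotionChecks(animations, trigger) {
  return animations
    .filter(({ tier }) => {
      const policy = TIER_POLICY[tier];
      if (!policy) throw new Error(`Unknown tier: ${tier}`);
      return policy.triggers.includes(trigger);
    })
    .map(({ id, tier }) => ({ id, checks: TIER_POLICY[tier].checks }));
}

const animations = [
  { id: 'checkout-confirm', tier: 1 },
  { id: 'route-transition', tier: 2 },
  { id: 'button-ripple', tier: 3 },
];

// On a pull request the tier-2 route transition is left to the scheduled run.
const prPlan = planMotionChecks(animations, 'pull_request');
```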

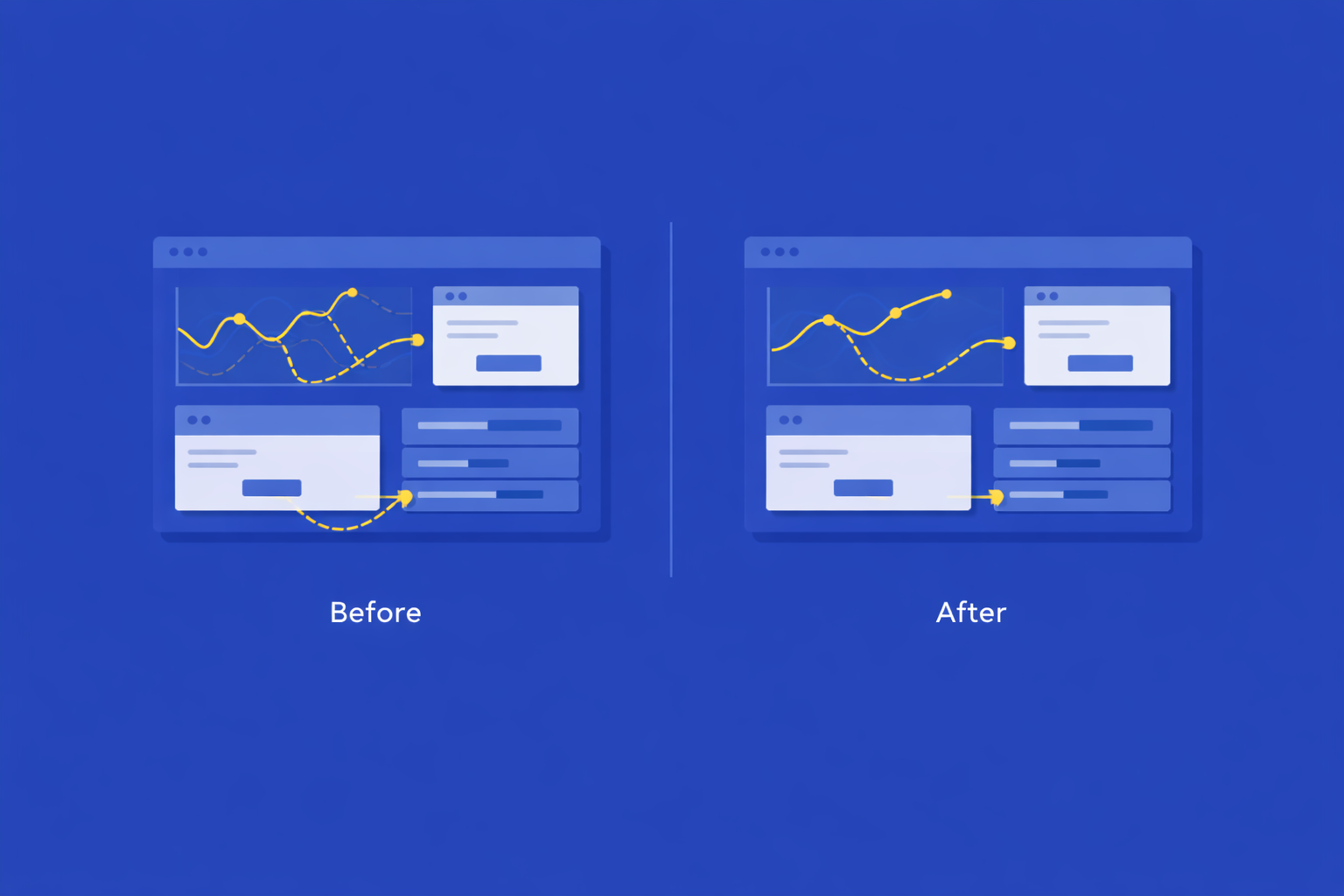

Case Study: Stabilizing a Motion-Heavy Dashboard

A data-intensive dashboard experienced recurring reports of “glitchy” behavior that were difficult to reproduce consistently.

Findings

- Chart animations relied on setInterval, causing frame drops under load

- Animation states were not canceled before starting new ones

- Timing logic was split across multiple layers
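The second finding has a common remedy: hold a handle to the running animation and cancel it before starting a replacement. Below is a minimal sketch. It assumes each animation object exposes cancel(), as the Web Animations API's Animation does. createAnimationSlot is a hypothetical helper, and the fake animation lets the sketch run outside a browser.

```javascript
// Keeps at most one running animation per slot: starting a new one cancels the previous.
function createAnimationSlot() {
  let current = null;
  return {
    // Cancels whatever is running, then starts and remembers the new animation.
    play(start) {
      if (current) current.cancel();
      current = start();
      return current;
    },
    // Cancels the running animation, if any, and clears the slot.
    stop() {
      if (current) current.cancel();
      current = null;
    },
  };
}

// In a browser this might be slot.play(() => bar.animate(keyframes, { duration: 300 })),
// where `bar` and `keyframes` are hypothetical. Here a fake logs cancellations instead.
const log = [];
const fakeAnimation = name => () => ({ cancel: () => log.push(`cancel ${name}`) });

const slot = createAnimationSlot();
slot.play(fakeAnimation('first'));
slot.play(fakeAnimation('second')); // log is now ['cancel first']
```

One caveat: Animation.cancel() also removes any fill: 'forwards' effect, so the element snaps back to its underlying style. If the next animation should start from the current on-screen position, calling commitStyles() before cancelling is one option.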

Intervention

Critical animations were migrated to the Web Animations API to enable time-based inspection.

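```javascript
// `element` and `offset` come from the surrounding chart code.
const animation = element.animate(
  [{ transform: 'translateY(0)' }, { transform: `translateY(${offset}px)` }],
  { duration: 300, easing: 'ease-out', fill: 'forwards' }
);

// Pause and seek to the midpoint so a test can inspect the intermediate state.
animation.pause();
animation.currentTime = 150;
```

A tiered testing strategy combined frame sampling, keyframe validation, and behavioral assertions.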
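Seeking is only useful with an expected value to compare against. Below is a minimal sketch of computing one, assuming the 300 ms ease-out animation from this case study and an illustrative offset of 100 px. CSS defines the ease-out keyword as cubic-bezier(0, 0, 0.58, 1). The solver below uses plain bisection, not the exact algorithm browsers use, so comparisons should allow a small tolerance.

```javascript
// Evaluates a CSS cubic-bezier(x1, y1, x2, y2) timing function at input progress x (0..1).
// Solves x(t) = x for the curve parameter t by bisection, then returns y(t).
// Bisection is valid because CSS restricts x1 and x2 to [0, 1], keeping x(t) monotonic.
function cubicBezier(x1, y1, x2, y2) {
  const coord = (t, p1, p2) => {
    const u = 1 - t;
    return 3 * u * u * t * p1 + 3 * u * t * t * p2 + t * t * t;
  };
  return x => {
    if (x <= 0) return 0;
    if (x >= 1) return 1;
    let lo = 0;
    let hi = 1;
    for (let i = 0; i < 50; i++) {
      const mid = (lo + hi) / 2;
      if (coord(mid, x1, x2) < x) lo = mid; else hi = mid;
    }
    return coord((lo + hi) / 2, y1, y2);
  };
}

const easeOut = cubicBezier(0, 0, 0.58, 1);

// Expected translateY (px) when the animation is seeked to `currentTime` ms.
function expectedTranslateY(currentTime, duration, offset) {
  return offset * easeOut(currentTime / duration);
}

// expectedTranslateY(150, 300, 100) is about 68.5 px (ease-out progress ≈ 0.685 at 50%).
```

A test could compare this value against the translation read from getComputedStyle(element).transform, allowing roughly a pixel of tolerance.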

Outcome

Motion regressions stopped appearing as a recurring incident category. Detection shifted from post-release to pull-request review. After initial tuning, maintenance cost stabilized.

Design iteration accelerated because regressions surfaced immediately and objectively.

Common Mistakes

- Using end-state assertions as a proxy for motion correctness

- Testing animations in isolation without real layout constraints

- Ignoring performance variability across devices

- Failing to test interrupted or overlapping animations

These patterns recur across many of the motion regressions that reach production.

Conclusion

Motion testing is not about visual perfection. It is about preserving behavioral intent under change.

When motion is treated as code - observable, testable, and reviewable - it stops being an invisible source of risk and becomes a reliable UX surface.

Teams that invest selectively in motion testing catch fewer surprises in production and have clearer conversations during review. Teams that do not will continue to debug temporal behavior after users notice it first.

Feb 5, 2026