From Visual Correctness to Measurable Brand Intent

Motion in digital products is not decorative. In mature design systems, animation encodes hierarchy, intent, feedback, and brand character. A modal that enters with measured deceleration communicates reassurance. A navigation transition that resolves decisively signals confidence and control.

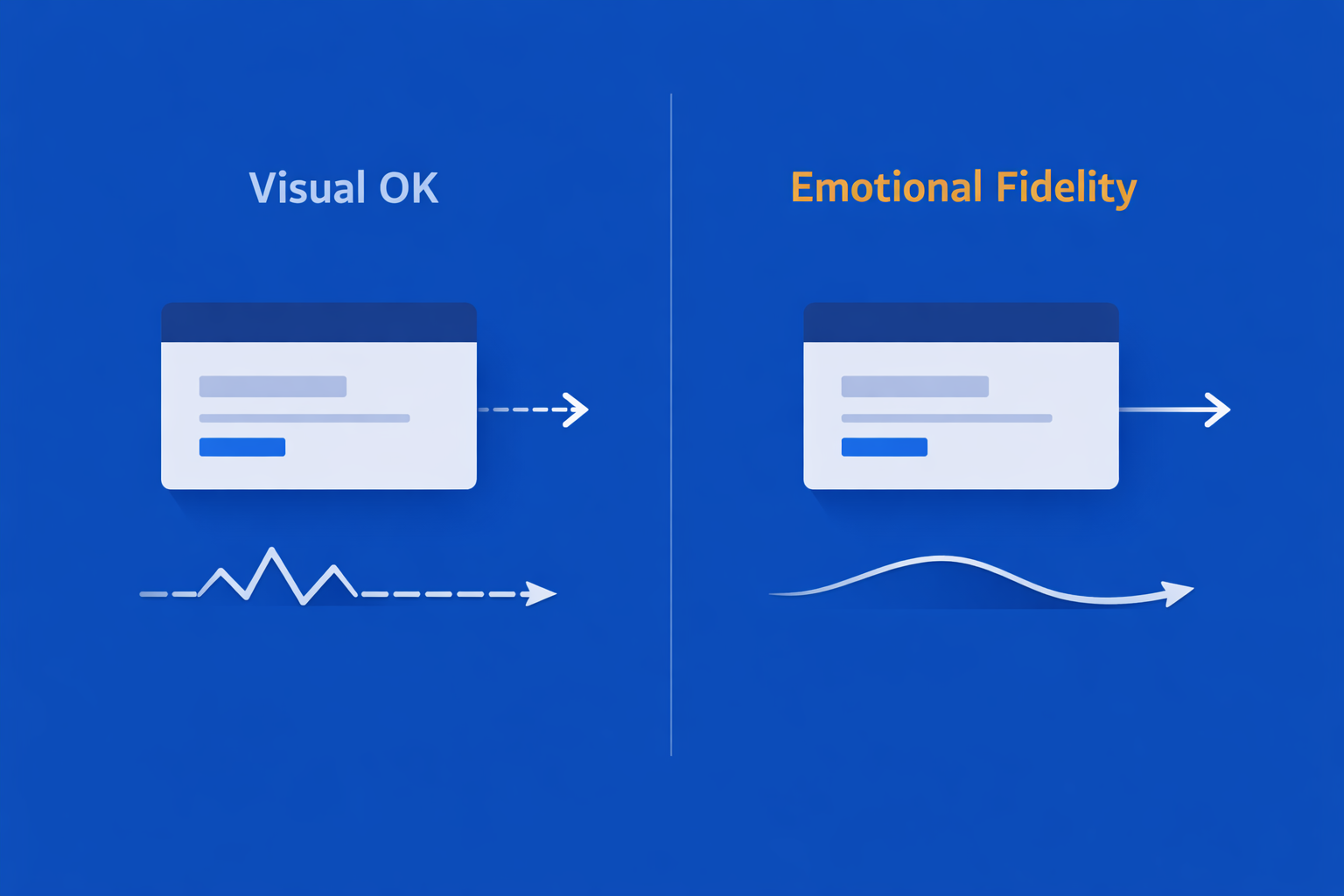

These effects are intentional. Yet in most production pipelines, motion is tested only for visual correctness:

- Does it play?

- Is it smooth?

- Is it broken?

What remains largely untested is emotional fidelity - whether runtime motion behavior consistently expresses intended brand qualities across releases, devices, and performance conditions.

This gap has become increasingly visible. As teams scale motion systems, adopt shared animation tokens, and optimize aggressively for performance, motion behavior drifts. Durations are shortened “to feel faster.” Easing curves are replaced with defaults. Frames are dropped on constrained devices. The animation still functions, but it no longer feels aligned with the brand.

Definition

Emotional fidelity is the degree to which runtime motion behavior remains within predefined temporal and easing constraints that are known to produce the intended brand perception under normal and degraded conditions.

This article argues that emotional fidelity can be treated as a testable quality attribute. Not by attempting to quantify emotion itself, but by instrumenting motion primitives, defining measurable constraints, and validating them systematically through QA.

Context: Motion primitives, brand intent, and unavoidable trade-offs

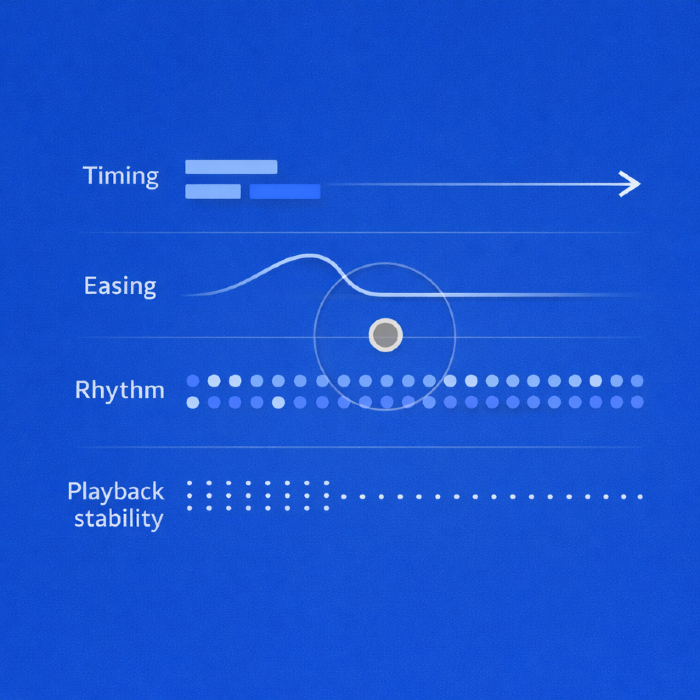

Modern motion systems are composed of a small set of primitives:

- Timing - duration, delay, and sequencing

- Easing - acceleration and deceleration curves

- Rhythm - temporal relationships between elements

- Playback stability - frame consistency under load

Design systems typically describe these primitives qualitatively:

“Transitions should feel calm.”

“Feedback should feel immediate.”

Such descriptions are directionally useful but operationally weak. They do not survive implementation detail, nor do they protect against gradual degradation under performance pressure.

Optimization introduces unavoidable trade-offs:

- Reduced durations to meet interaction latency targets

- Simplified easing functions for runtime efficiency

- Frame skipping under CPU or GPU contention

- Motion reduction under accessibility preferences

The problem is not optimization itself. The problem is unbounded optimization: changes made without constraints that preserve emotional intent.

Spec drift vs runtime degradation

Two distinct failure modes must be separated:

- Spec drift - intentional changes that gradually erode motion character (shortened durations, replaced easings).

- Runtime degradation - unintentional changes caused by performance pressure (dropped frames, delayed starts).

Emotional fidelity QA must detect both - and treat them differently.

Implementation: Instrumenting Motion for Objective Measurement

Testing emotional fidelity requires observing motion beyond pixels. This demands lightweight instrumentation that captures how animations behave at runtime.

Timing metrics

For each animated interaction, capture:

- Intended duration (from motion tokens or spec)

- Actual runtime duration

- Deviation percentage

Example:

Expected: 320ms

Actual: 240ms

Deviation: −25%

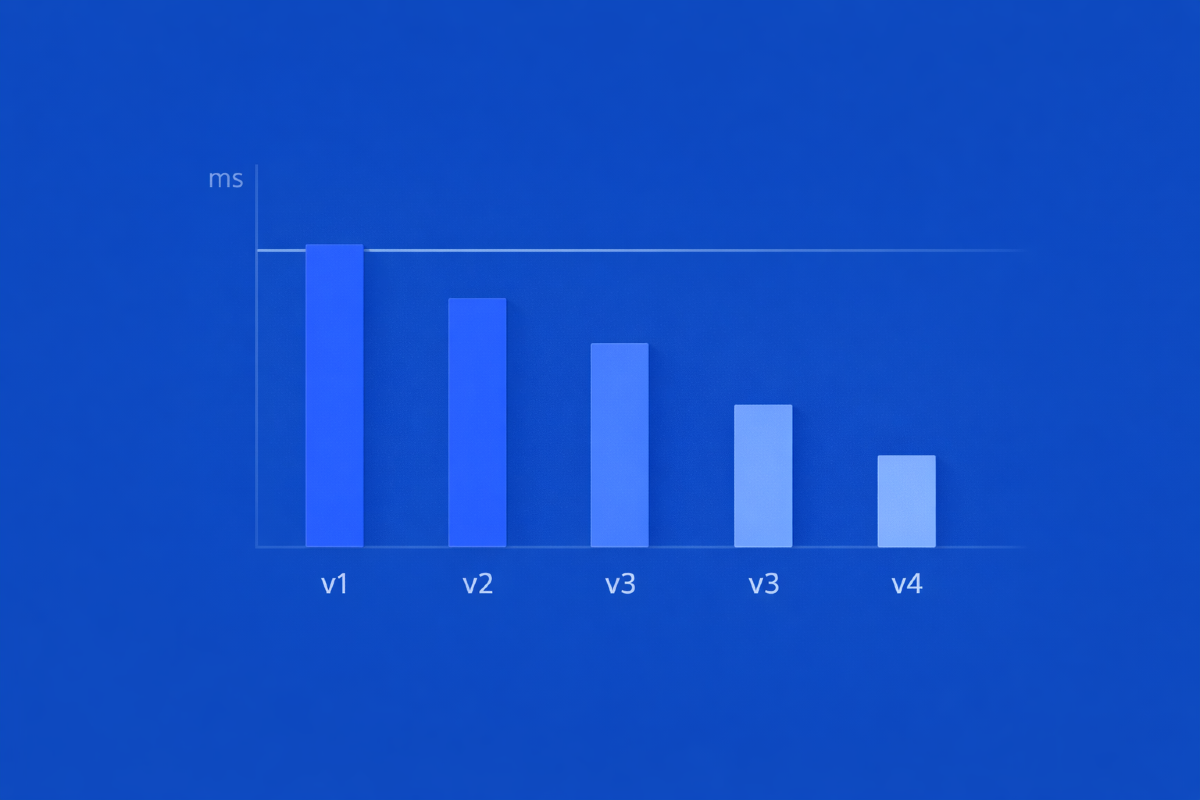
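The deviation in the example above can be computed directly. The following is a minimal sketch, assuming durations are recorded in milliseconds; the function name `duration_deviation_pct` is illustrative and not part of any existing tool.

```python
def duration_deviation_pct(expected_ms: float, actual_ms: float) -> float:
    """Signed deviation of the runtime duration from the spec, in percent.

    Negative values mean the animation ran shorter than specified.
    """
    if expected_ms <= 0:
        raise ValueError("expected_ms must be positive")
    return (actual_ms - expected_ms) / expected_ms * 100.0


print(duration_deviation_pct(320, 240))  # -25.0
```

Keeping the sign matters: a shortened animation and a stretched one usually point to different causes.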

Single deviations are rarely meaningful. Trends across builds are.

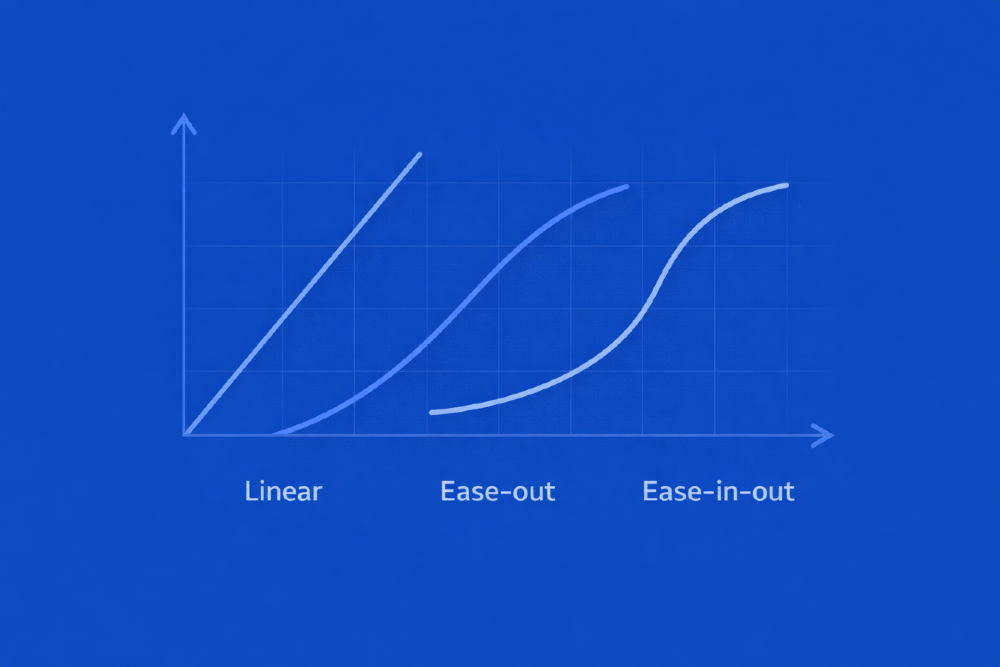
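One way to separate a trend from a one-off spike is to look at the mean deviation over the last few builds. This is a sketch under stated assumptions: the window of three builds and the 10% threshold are placeholder values, and `is_drifting` is a hypothetical helper name.

```python
from statistics import mean


def is_drifting(deviations_pct, window=3, threshold_pct=10.0):
    """Return True when the mean deviation of the last `window` builds
    exceeds `threshold_pct` in either direction.

    `deviations_pct` is ordered oldest build first. Window and threshold
    are assumed values that a team would tune for itself.
    """
    if len(deviations_pct) < window:
        return False  # not enough history to call it a trend
    recent = deviations_pct[-window:]
    return abs(mean(recent)) > threshold_pct


steady_erosion = [-1.0, 2.0, -4.0, -9.0, -12.0, -15.0]
single_spike = [0.5, -1.0, -25.0, 0.0, 1.0]

print(is_drifting(steady_erosion))  # True
print(is_drifting(single_spike))    # False
```

The single −25% build does not trip the check on its own, while three consecutive builds that each run noticeably short do.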

Easing classification

Easing functions encode emotional character more strongly than duration alone. A linear transition communicates mechanical efficiency; an ease-out communicates control and composure.

Instrumentation should:

- Capture the easing function used

- Normalize it into a classified category

- Validate it against allowed profiles per interaction type

This enables explicit rules, such as:

- Primary navigation must not use linear easing.

- Feedback animations may accelerate but must not overshoot.
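The classification step can be sketched as follows. This assumes easings are captured as CSS-style cubic Bézier control points `(x1, y1, x2, y2)` with `x1` and `x2` in the range 0 to 1. The category names, the 0.02 tolerance, and the rule table are illustrative choices: the curve is labeled by comparing its progress at 25% and 75% of the duration against a linear baseline, and any curve whose progress leaves the 0 to 1 range is labeled as overshoot.

```python
def _bezier(t, p1, p2):
    """One coordinate of a cubic Bezier with endpoints fixed at 0 and 1."""
    u = 1.0 - t
    return 3 * u * u * t * p1 + 3 * u * t * t * p2 + t ** 3


def _progress_at(x, x1, y1, x2, y2):
    """Progress (y) at normalized time x, found by bisection on x(t)."""
    lo, hi = 0.0, 1.0
    for _ in range(40):
        mid = (lo + hi) / 2
        if _bezier(mid, x1, x2) < x:
            lo = mid
        else:
            hi = mid
    return _bezier((lo + hi) / 2, y1, y2)


def classify_easing(x1, y1, x2, y2, tol=0.02):
    """Normalize a cubic Bezier easing into a coarse category."""
    samples = [_bezier(i / 100, y1, y2) for i in range(101)]
    if max(samples) > 1 + 1e-6 or min(samples) < -1e-6:
        return "overshoot"
    early = _progress_at(0.25, x1, y1, x2, y2)
    late = _progress_at(0.75, x1, y1, x2, y2)
    if abs(early - 0.25) <= tol and abs(late - 0.75) <= tol:
        return "linear"
    if early < 0.25 and late > 0.75:
        return "ease-in-out"   # slow start, slow end
    if early >= 0.25 and late >= 0.75:
        return "ease-out"      # ahead of linear throughout
    if early <= 0.25 and late <= 0.75:
        return "ease-in"       # behind linear throughout
    return "other"


# Hypothetical rule table mirroring the two example rules above.
FORBIDDEN = {
    "primary_navigation": {"linear"},
    "feedback": {"overshoot"},
}


def violates(interaction_type, category):
    return category in FORBIDDEN.get(interaction_type, set())


print(classify_easing(0.0, 0.0, 0.58, 1.0))   # ease-out
print(classify_easing(0.0, 0.0, 1.0, 1.0))    # linear
print(violates("primary_navigation", "linear"))  # True
```

Classifying by sampled behavior rather than by keyword means a custom curve that behaves linearly is still caught, even if it was never declared as `linear`.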

Rhythm and sequencing

Perceived smoothness is shaped by relative timing, not absolute values. A tight stagger helps related elements feel coordinated and hierarchical. A wider stagger can make the same sequence feel delayed and disconnected.

For each group of related animations, observe:

- Start offsets between related animations.

- Overlap ratios.

- Sequential vs parallel execution patterns.

Validate these against documented ranges rather than fixed values to preserve flexibility without inconsistency.
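A sketch of the rhythm measurements, assuming each animation is recorded as a start and end timestamp in milliseconds. The `Span` type, the definition of overlap ratio (overlap divided by the shorter animation's duration), and the 30 to 60 ms stagger range are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Span:
    name: str
    start_ms: float
    end_ms: float


def stagger_offsets(spans):
    """Start offsets between consecutive animations, ordered by start time."""
    ordered = sorted(spans, key=lambda s: s.start_ms)
    return [b.start_ms - a.start_ms for a, b in zip(ordered, ordered[1:])]


def overlap_ratio(a, b):
    """Shared running time divided by the shorter animation's duration.

    0.0 means strictly sequential, 1.0 means the shorter animation runs
    entirely inside the longer one.
    """
    overlap = min(a.end_ms, b.end_ms) - max(a.start_ms, b.start_ms)
    shorter = min(a.end_ms - a.start_ms, b.end_ms - b.start_ms)
    return max(0.0, overlap) / shorter if shorter > 0 else 0.0


def within(values, low, high):
    """True when every value falls inside the documented range."""
    return all(low <= v <= high for v in values)


tight = [Span("title", 0, 300), Span("body", 40, 340), Span("cta", 80, 380)]
wide = [Span("title", 0, 300), Span("body", 150, 450), Span("cta", 300, 600)]

print(within(stagger_offsets(tight), 30, 60))  # True
print(within(stagger_offsets(wide), 30, 60))   # False
print(overlap_ratio(wide[0], wide[2]))         # 0.0
```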

Playback stability

Frame consistency directly affects emotional tone. Dropped frames introduce tension, even if duration remains nominal.

Track:

- Average frame rate during animation

- Frame drop count

- Longest frame gap

This is especially critical on mid-range devices, where emotional degradation appears first.
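The three stability metrics can be derived from a list of frame timestamps captured during the animation. This sketch assumes a 60 Hz target and counts a dropped frame whenever a gap spans roughly two or more frame budgets; both are assumptions, and `playback_stats` is a hypothetical name.

```python
def playback_stats(frame_times_ms, target_fps=60.0):
    """Summarize frame consistency from presentation timestamps (ms)."""
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frame timestamps")
    total_ms = frame_times_ms[-1] - frame_times_ms[0]
    if total_ms <= 0:
        raise ValueError("timestamps must be increasing")
    budget_ms = 1000.0 / target_fps
    gaps = [b - a for a, b in zip(frame_times_ms, frame_times_ms[1:])]
    # A gap of about two budgets means one frame was missed, and so on.
    dropped = sum(max(0, round(g / budget_ms) - 1) for g in gaps)
    return {
        "avg_fps": len(gaps) / total_ms * 1000.0,
        "dropped_frames": dropped,
        "longest_gap_ms": max(gaps),
    }


stats = playback_stats([0.0, 16.7, 33.4, 66.8, 83.5])
print(stats["dropped_frames"])  # 1
```

Reporting the longest gap alongside the average matters because a single long stall can be hidden by an otherwise healthy average frame rate.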

Mapping Metrics to Brand Intent

Metrics are only useful when interpreted. Emotional fidelity testing requires an explicit mapping between measured motion behavior and intended brand qualities.

This mapping should be authored collaboratively by design, motion, and QA.

Mapping Brand Attributes to Motion Constraints

| Brand Attribute | Motion Constraint |
|---|---|
| Calm | Duration ≥ 280ms, no linear easing |
| Confident | Ease-out dominant, no overshoot |
| Responsive | Feedback ≤ 120ms |
| Premium | ≤ 2 dropped frames per animation |

Constraints should be expressed as ranges, not absolutes. Emotional consistency is preserved through tolerance, not rigidity.
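The table can be expressed as executable checks. In this sketch the `Measurement` fields and the `CONSTRAINTS` mapping are hypothetical. "Ease-out dominant, no overshoot" is interpreted here as the easing being classified as `ease-out`, on the assumption that overshooting curves receive their own category; that interpretation would need to be confirmed by the design team.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    duration_ms: float
    easing: str          # classified category, e.g. "ease-out" or "linear"
    dropped_frames: int


# One predicate per brand attribute, using the thresholds from the table.
CONSTRAINTS = {
    "calm": lambda m: m.duration_ms >= 280 and m.easing != "linear",
    "confident": lambda m: m.easing == "ease-out",
    "responsive": lambda m: m.duration_ms <= 120,
    "premium": lambda m: m.dropped_frames <= 2,
}


def failed_attributes(measurement, attributes):
    """Return the brand attributes whose constraints are not met."""
    return [a for a in attributes if not CONSTRAINTS[a](measurement)]


modal_open = Measurement(duration_ms=240, easing="ease-out", dropped_frames=1)
print(failed_attributes(modal_open, ["calm", "confident", "premium"]))  # ['calm']
```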

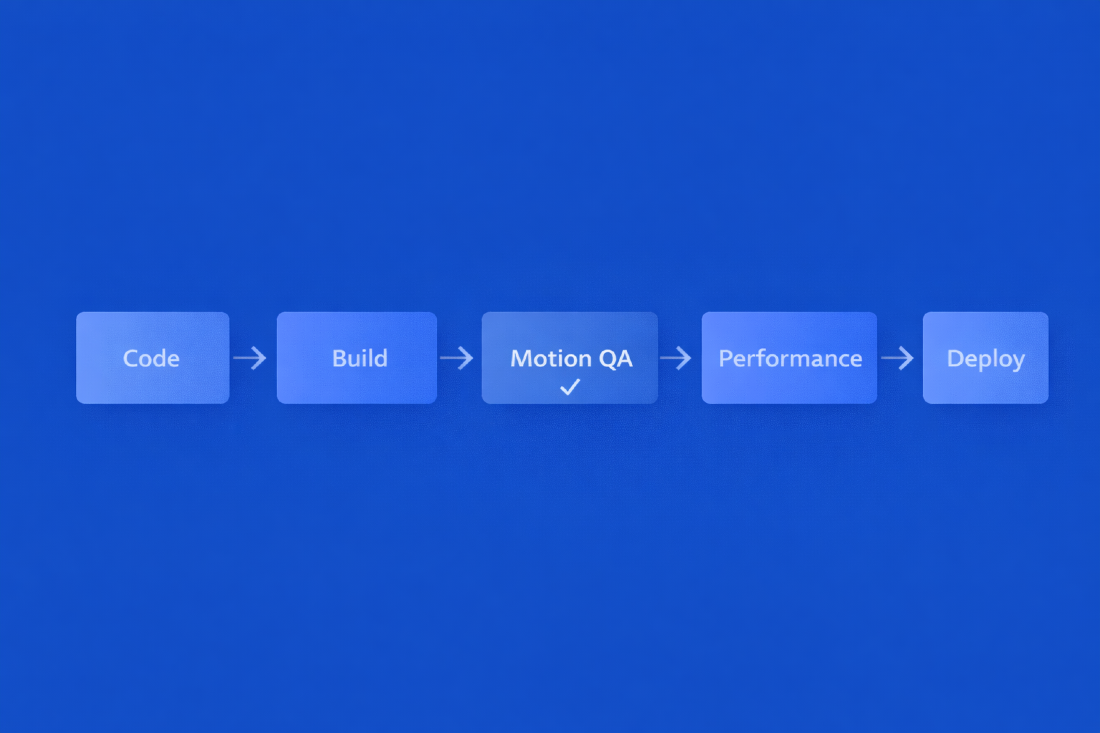

Testing and QA: Enforcement, Not Suggestion

Automated checks

Once instrumented, motion metrics can be enforced through automated QA:

- Component-level tests

- Runtime instrumentation tests

- Regression detection across builds

Motion checks belong in CI alongside performance budgets. Motion is not separate from UX performance - it is part of it.

Hard failure rule

Any interaction classified as “calm” that executes with linear easing is considered a failure, regardless of duration or frame stability.
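A hard failure rule differs from a range check in that nothing else can offset it. The sketch below illustrates that precedence; the three-level `pass` / `warn` / `fail` scale and the `soft_violations` parameter are assumptions added for illustration, and only the calm-plus-linear rule comes from the text above.

```python
def verdict(brand_attribute, easing, soft_violations):
    """Combine the hard failure rule with softer, range-based findings.

    `soft_violations` is a list of human-readable findings such as
    "duration -12%". It never overrides a hard failure.
    """
    if brand_attribute == "calm" and easing == "linear":
        return "fail"  # hard rule: duration and frame stability are ignored
    return "warn" if soft_violations else "pass"


print(verdict("calm", "linear", []))                    # fail
print(verdict("calm", "ease-out", ["duration -12%"]))   # warn
```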

Human validation protocols

Automation cannot judge perception. Human validation remains necessary - but must be constrained.

Effective protocol:

- Short, focused motion reviews

- Evaluation against explicit brand attributes

- Mandatory review under degraded conditions

Reviewers should not debate aesthetics. Their role is to confirm whether measured behavior aligns with intended perception.

Why user testing is insufficient

User testing detects outcomes, not causes. Motion degradation accumulates gradually and rarely triggers explicit feedback. Emotional fidelity often fails silently, only becoming visible after brand perception has shifted.

QA exists to prevent silent failure modes. Motion is one of them.

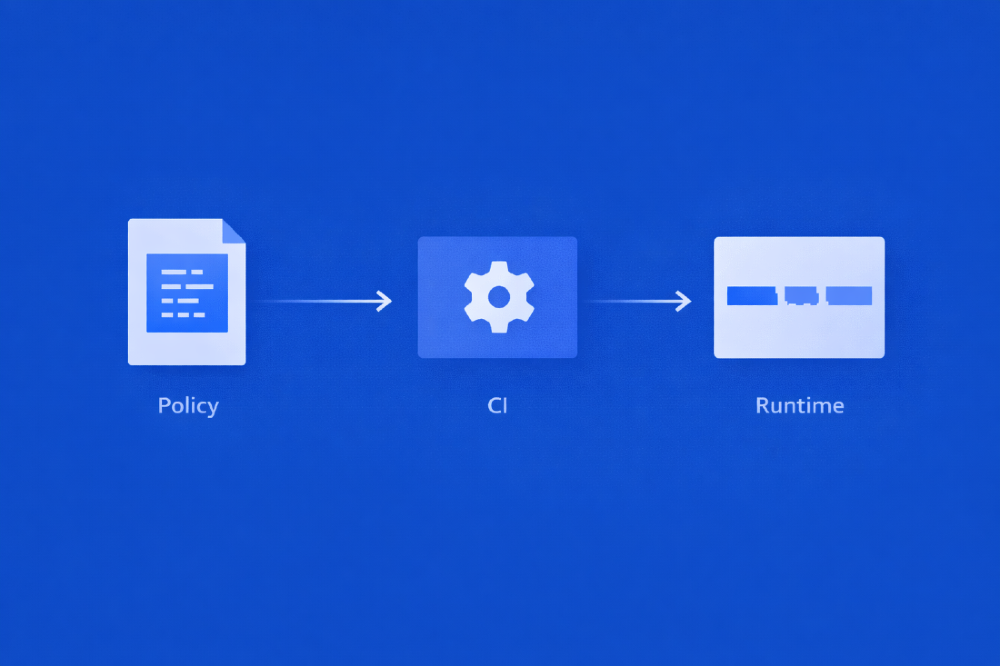

Motion QA Policy (Minimal)

    motion_quality_policy:
      navigation_transition:
        duration_ms: [280, 420]
        easing: ["ease-out", "ease-in-out"]
        max_frame_drops: 2
      feedback_animation:
        duration_ms: [80, 140]
        easing: ["ease-out"]
        allow_linear: false
      global:
        max_duration_deviation_pct: 20
        report_on_regression_only: true
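A policy like this could be enforced with a few lines of code. The sketch below mirrors the two interaction entries as a Python dictionary rather than parsing the YAML, to stay dependency-free; the `check` function and its messages are hypothetical, and the `global` settings are left out for brevity.

```python
POLICY = {
    "navigation_transition": {
        "duration_ms": (280, 420),
        "easing": {"ease-out", "ease-in-out"},
        "max_frame_drops": 2,
    },
    "feedback_animation": {
        "duration_ms": (80, 140),
        "easing": {"ease-out"},
    },
}


def check(kind, duration_ms, easing, frame_drops=0):
    """Return a list of policy violations for one measured animation."""
    rule = POLICY[kind]
    problems = []
    low, high = rule["duration_ms"]
    if not low <= duration_ms <= high:
        problems.append(f"duration {duration_ms}ms outside [{low}, {high}]")
    if easing not in rule["easing"]:
        problems.append(f"easing '{easing}' not allowed")
    max_drops = rule.get("max_frame_drops")
    if max_drops is not None and frame_drops > max_drops:
        problems.append(f"{frame_drops} frame drops exceeds {max_drops}")
    return problems


for problem in check("navigation_transition", 240, "linear", frame_drops=3):
    print(problem)
```

An empty list means the animation is within policy, so a CI step can fail the build whenever any list is non-empty.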

This policy is intentionally minimal. Its purpose is constraint, not prescription.

Conclusion

Emotional fidelity in motion is not subjective intuition. It is an emergent property of timing, easing, rhythm, and playback stability - all measurable.

Teams that do not test motion explicitly still test it implicitly: through inconsistency, brand dilution, and degraded experiences on constrained devices.

The goal is not rigid animation, but controlled expressiveness - motion that remains emotionally consistent as products evolve, teams scale, and constraints change.

Motion is already part of the system. It should be part of quality.

Jan 27, 2026