Introduction: QA Without Behavior Is QA Without Vision

For decades, companies have tested “against requirements.” Checklists, regression suites, edge cases. But the market reality has changed: UX expectations have gone mainstream. Users expect seamless experiences - not just the absence of bugs.

The paradox is simple: a product can fully meet the specification and still fail with real users. Why? Because QA rarely reflects actual user behavior.

Behavior-led QA changes this dynamic. It’s not another buzzword methodology. It’s a shift in QA’s role - from guardian of checklists to advocate for user experience.

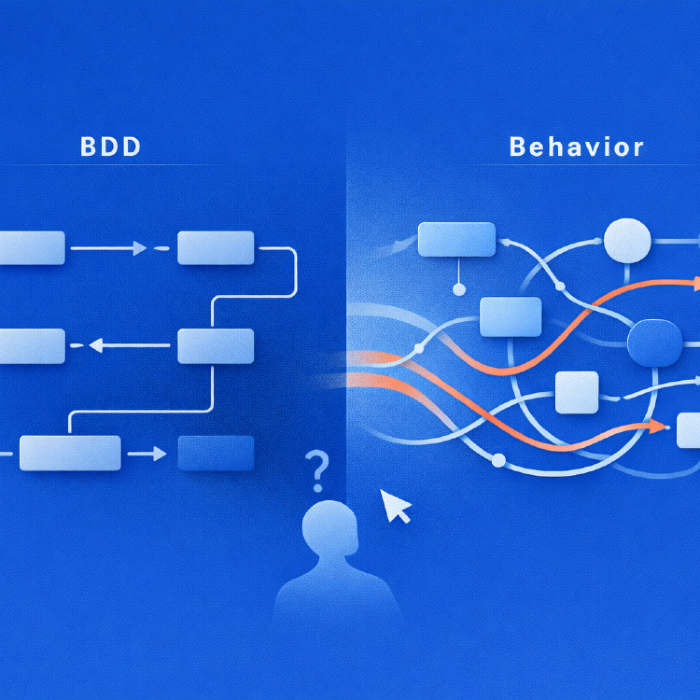

What Is Behavior-led QA and How It Differs From BDD

BDD (Behavior-Driven Development) is a mature practice that aligns business requirements with code. Its “behavior” is captured in formalized user stories and scenarios written before implementation - assumptions about how users should behave.

Behavior-led QA is different:

BDD - hypothetical behavior (scenarios in Gherkin)

Behavior-led QA - actual behavior (captured from analytics and session replay)

The distinction is fundamental.

BDD tests “how it should be.” Behavior-led QA tests “how it actually is.”

Collecting User Flow Data

QA without data is QA in the dark. To build tests on behavior, you need reliable sources:

Product analytics (Mixpanel, Amplitude, Heap). They reveal real flows: how users reach checkout, or where they abandon registration.

Session replay (FullStory, Hotjar, LogRocket). Recordings of real sessions - was the cursor wandering around a button for 10 seconds? That’s a signal.

Server logs. Dropped requests, timeouts, interrupted sessions.

Feedback channels. Support tickets, in-app surveys - verbal traces of behavior.

QA’s task is to transform these inputs into test cases. Not “we assume users click Sign up,” but “70% of new users choose Google SSO, and that’s exactly where onboarding fails.”
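As a minimal sketch of that transformation, assume analytics events have been exported as `(user_id, timestamp, event_name)` tuples. This format and the event names below are hypothetical; real Mixpanel, Amplitude, or Heap exports have their own schemas. The code rebuilds each user's path, counts how often each path occurs, and compares signup methods by share and by onboarding completion.

```python
from collections import Counter, defaultdict

# Hypothetical export format: (user_id, timestamp, event_name).
# Real Mixpanel/Amplitude/Heap exports have their own schemas.
events = [
    ("u1", 1, "landing"), ("u1", 2, "signup_google"), ("u1", 3, "onboarding_done"),
    ("u2", 1, "landing"), ("u2", 2, "signup_google"),
    ("u3", 1, "landing"), ("u3", 2, "signup_email"), ("u3", 3, "onboarding_done"),
    ("u4", 1, "landing"), ("u4", 2, "signup_google"), ("u4", 3, "onboarding_done"),
]


def user_paths(events):
    """Group events per user and order them by timestamp into a path tuple."""
    by_user = defaultdict(list)
    for user_id, ts, name in events:
        by_user[user_id].append((ts, name))
    return {u: tuple(name for _, name in sorted(evts)) for u, evts in by_user.items()}


def signup_method(path):
    """First event starting with 'signup_', or 'none' if the user never signed up."""
    return next((e for e in path if e.startswith("signup_")), "none")


def method_share(paths):
    """Share of users per signup method."""
    counts = Counter(signup_method(p) for p in paths.values())
    total = sum(counts.values())
    return {m: n / total for m, n in counts.items()}


def completion_by_method(paths, goal="onboarding_done"):
    """Fraction of users per signup method whose path reaches the goal event."""
    started, finished = Counter(), Counter()
    for p in paths.values():
        m = signup_method(p)
        started[m] += 1
        if goal in p:
            finished[m] += 1
    return {m: finished[m] / started[m] for m in started}


paths = user_paths(events)
for path, count in Counter(paths.values()).most_common():
    print(count, " -> ".join(path))
print("share:", method_share(paths))
print("completion:", completion_by_method(paths))
```

A method that dominates the share figures but lags on completion, like the Google branch in this toy data, is the kind of journey that should become a test case first.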

Prioritizing Tests: Popular Journeys vs. Edge Cases

In classical QA, edge cases often dominate. Behavior-led QA flips the model:

Popular paths. The most frequent customer journeys form the regression core. Checkout, onboarding, balance checks - these require zero tolerance for defects.

The middle layer. Flows like password reset are tested regularly but do not compete with the critical journeys.

Edge cases. Still important, but with lower weight.

The result: QA stops being a random sample of scenarios and becomes a faithful mirror of user reality.
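One way to make the three layers concrete is to tier journeys by their share of sessions. The sketch below assumes journey counts are already available from product analytics and uses hypothetical cut-offs (10% of sessions for the core, 1% for the middle layer). The journey names and numbers are invented for illustration, and `pinned_core` is an assumed escape hatch for flows a team decides must never regress regardless of traffic.

```python
from typing import Dict, Iterable

# Hypothetical thresholds on share of sessions; tune per product.
POPULAR_SHARE = 0.10
MIDDLE_SHARE = 0.01


def tier_journeys(counts: Dict[str, int], pinned_core: Iterable[str] = ()) -> Dict[str, str]:
    """Assign each journey to 'core', 'middle' or 'edge' by traffic share.

    Journeys listed in pinned_core go to the regression core regardless of traffic.
    """
    total = sum(counts.values())
    pinned = set(pinned_core)
    tiers = {}
    for journey, n in counts.items():
        share = n / total if total else 0.0
        if journey in pinned or share >= POPULAR_SHARE:
            tiers[journey] = "core"
        elif share >= MIDDLE_SHARE:
            tiers[journey] = "middle"
        else:
            tiers[journey] = "edge"
    return tiers


# Illustrative session counts, not real data.
counts = {
    "checkout": 5200,
    "onboarding": 3100,
    "balance_check": 1400,
    "password_reset": 250,
    "change_currency_mid_checkout": 30,
}
print(tier_journeys(counts))
```

Share-based thresholds keep the tiers stable as overall traffic grows; absolute counts would push more journeys into the core every month.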

Case Studies and Numbers

Before diving into tools and theory, here are real-world examples of how behavior-led QA changes outcomes.

To protect client confidentiality, they are based on real engagement patterns and outcomes, with company names and identifying details omitted under NDA.

E-commerce Checkout: QA tested “cart → payment,” but data showed 40% of users went straight to “Buy Now.” Fixing a bug in Apple Pay raised checkout conversion by 6.5%.

SaaS Onboarding: QA focused on email registration, while analytics revealed 70% of users logged in via Google SSO. Fixing redirect delays reduced drop-off from 22% to 11%.

Mobile Banking: Regression focused on features, but 60% of sessions were “check balance + transaction history.” Catching an API bug in that journey averted a potential outage for 200k users.

The insight: defects often break not edge cases, but the most popular paths.
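Figures like “22% to 11%” are only comparable if the definition is pinned down. A minimal sketch, assuming drop-off means the share of users who start a step but never complete it (the case studies do not state their exact definition), with invented counts chosen to reproduce the SaaS onboarding numbers:

```python
def drop_off(started: int, completed: int) -> float:
    """Fraction of users who started a step but did not complete it."""
    if started <= 0:
        raise ValueError("started must be positive")
    return 1 - completed / started


def relative_change(before: float, after: float) -> float:
    """Relative change between two rates (negative means a reduction)."""
    return (after - before) / before


# Invented counts that reproduce the SaaS onboarding figures.
before = drop_off(started=1000, completed=780)  # about 22% drop-off
after = drop_off(started=1000, completed=890)   # about 11% drop-off

absolute_pp = (before - after) * 100  # change in percentage points
print(f"before={before:.0%} after={after:.0%} "
      f"absolute={absolute_pp:.0f} pp relative={relative_change(before, after):.0%}")
```

Reporting both the absolute change (11 percentage points) and the relative change (a 50% reduction) avoids ambiguity when results are shared with product and business teams.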

Linking QA and Product Analytics

Behavior-led QA cannot exist without strong analytics. Their synergy creates direct business value:

QA derives scenarios from analytics. Drop-off points automatically feed into regression.

QA feeds insights back. When regression confirms a bug at a drop-off point, analytics quantifies the business loss.

Shared dashboards. QA metrics integrated into Amplitude/Mixpanel show product leaders not just “a test failed,” but “a test failed in a journey that drives 40% of revenue.”
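A sketch of that loop, under stated assumptions: per-step drop-off rates and per-journey revenue shares are exported from the analytics tool as plain dictionaries, test results arrive from the test runner tagged with a journey name, and the 30% drop-off threshold is hypothetical. No Amplitude, Mixpanel, Qase, or TestRail API is called here; connecting real tools would go through each vendor's documented integrations.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CheckResult:
    test_id: str
    journey: str
    passed: bool


# Hypothetical threshold: steps losing more than 30% of users become regression candidates.
DROP_OFF_THRESHOLD = 0.30


def regression_candidates(step_drop_off: Dict[str, float]) -> List[str]:
    """Steps whose drop-off exceeds the threshold, worst first."""
    flagged = [s for s, rate in step_drop_off.items() if rate > DROP_OFF_THRESHOLD]
    return sorted(flagged, key=lambda s: step_drop_off[s], reverse=True)


def annotate_failures(results: List[CheckResult], revenue_share: Dict[str, float]) -> List[str]:
    """Turn failed tests into messages stating the revenue weight of their journey."""
    failed = [r for r in results if not r.passed]
    failed.sort(key=lambda r: revenue_share.get(r.journey, 0.0), reverse=True)
    return [
        f"{r.test_id} failed in '{r.journey}', a journey driving "
        f"{revenue_share.get(r.journey, 0.0):.0%} of revenue"
        for r in failed
    ]


# Illustrative inputs, not real data.
step_drop_off = {"cart": 0.12, "shipping": 0.35, "payment": 0.41}
revenue_share = {"buy_now": 0.40, "cart_checkout": 0.45, "password_reset": 0.0}
results = [
    CheckResult("T-101", "buy_now", False),
    CheckResult("T-102", "cart_checkout", True),
    CheckResult("T-207", "password_reset", False),
]
print(regression_candidates(step_drop_off))
for message in annotate_failures(results, revenue_share):
    print(message)
```

Sorting failures by revenue share puts the message a product leader cares about at the top of the report.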

Behavior Testing Tools

Behavior-led QA leverages existing ecosystems:

| Category | Tool | Website |
|---|---|---|
| Commercial analytics | Mixpanel | mixpanel.com |
| Commercial analytics | Amplitude | amplitude.com |
| Commercial analytics | Heap | heap.io |
| Open-source analytics | PostHog | posthog.com |
| Session replay | FullStory | fullstory.com |
| Session replay | Hotjar | hotjar.com |
| Session replay | LogRocket | logrocket.com |
| Test case management | Qase | qase.io |
| Test case management | TestRail | testrail.com |
| Automation | n8n | n8n.io |
| Automation | Zapier | zapier.com |
| AI for scenario generation | GPT (ChatGPT) | chatgpt.com |
| AI for scenario generation | Mistral | mistral.ai |

The point is not which solution you choose, but that QA gains access to real behavior, not hypotheses.

The Role of AI and the Privacy Question

AI is a catalyst for Behavior-led QA:

Automated test generation. LLMs can be prompted with (or fine-tuned on) logs and replay summaries to draft scenarios reflecting actual paths.

Data classification. AI can split flows into “popular” and “rare,” setting regression priorities automatically.

Vision-based replay analysis. Computer vision models can detect “frustration patterns” - chaotic cursor movement, repeated failed clicks.
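The list above refers to computer vision models. As a much simpler stand-in (a plain heuristic, not a vision model), one frustration pattern, repeated clicks on the same spot, can be approximated directly from click events. The sketch flags a “rage click” burst when at least three clicks land within one second and 30 pixels of the first click; the event format and all three thresholds are assumptions, and replay vendors use their own definitions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Click:
    t: float  # seconds since session start
    x: int
    y: int


# Hypothetical thresholds.
MIN_CLICKS = 3
WINDOW_S = 1.0
RADIUS_PX = 30


def rage_click_bursts(clicks: List[Click]) -> List[List[Click]]:
    """Return bursts of clicks that are close to an anchor click in time and space."""
    clicks = sorted(clicks, key=lambda c: c.t)
    bursts = []
    i = 0
    while i < len(clicks):
        anchor = clicks[i]
        burst_idx = [i]
        j = i + 1
        # Scan forward while still inside the time window of the anchor click.
        while j < len(clicks) and clicks[j].t - anchor.t <= WINDOW_S:
            c = clicks[j]
            if (c.x - anchor.x) ** 2 + (c.y - anchor.y) ** 2 <= RADIUS_PX ** 2:
                burst_idx.append(j)
            j += 1
        if len(burst_idx) >= MIN_CLICKS:
            bursts.append([clicks[k] for k in burst_idx])
            i = burst_idx[-1] + 1  # resume after the last click in the burst
        else:
            i += 1
    return bursts


session = [
    Click(0.0, 100, 200), Click(0.3, 105, 198), Click(0.6, 102, 203),
    Click(0.9, 101, 201), Click(5.0, 400, 50), Click(5.2, 600, 300),
]
for burst in rage_click_bursts(session):
    print(f"rage click: {len(burst)} clicks near ({burst[0].x}, {burst[0].y})")
```

As the limitations below note, cursor and click signals are noisy, so bursts like these are best treated as pointers to replays worth watching rather than as confirmed defects.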

But privacy cannot be ignored:

Behavioral data collection must comply with GDPR and CCPA.

QA teams should work with aggregated, anonymized datasets.

Session replay must not become surveillance.

AI should process sanitized logs, not raw user data.
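A minimal sketch of sanitizing log records before they reach an AI model or a shared dataset, assuming records are flat dictionaries with hypothetical field names. It replaces user IDs with keyed HMAC-SHA256 pseudonyms (journeys can still be stitched together without exposing the raw ID), drops fields on an assumed deny-list, and masks email addresses in free text with a deliberately simple regex. Under GDPR, pseudonymized data still counts as personal data, so this complements aggregation rather than replacing it.

```python
import hashlib
import hmac
import re
from typing import Any, Dict

# Assumption: in production the key comes from a secret store, never from source code.
PSEUDONYM_KEY = b"replace-with-secret-from-vault"

# Hypothetical deny-list of fields that should never leave the logging pipeline.
DROP_FIELDS = {"ip_address", "full_name", "phone"}

# Deliberately simple email pattern; it will not catch every valid address.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(\.[\w-]+)+")


def pseudonymize(user_id: str) -> str:
    """Stable keyed pseudonym: same input gives same output; hard to link back without the key."""
    digest = hmac.new(PSEUDONYM_KEY, user_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]


def sanitize(record: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of the record that is safer to share with analytics or AI tooling."""
    clean = {}
    for key, value in record.items():
        if key in DROP_FIELDS:
            continue
        if key == "user_id":
            clean[key] = pseudonymize(str(value))
        elif isinstance(value, str):
            clean[key] = EMAIL_RE.sub("[email]", value)
        else:
            clean[key] = value
    return clean


raw = {
    "user_id": "42",
    "ip_address": "203.0.113.7",
    "event": "checkout_failed",
    "note": "contact jane.doe@example.com",
    "latency_ms": 5300,
}
print(sanitize(raw))
```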

Behavior-led QA is not about monitoring users. It is about protecting their experience.

Behavior-led QA Across Industries

Behavior-led QA is not limited to SaaS or e-commerce:

E-commerce. Test “Buy Now” - in the checkout case above, 40% of shoppers used it. A bug here equals direct revenue loss.

SaaS. Prioritize Google SSO onboarding, not just email registration.

Fintech. Build regression around balance checks and transaction history. Errors here can quickly erode NPS.

Gaming. QA covers in-game purchase journeys - drop-off directly hits ARPU.

Healthcare. The online appointment-booking flow matters more than rare edge cases.

Limitations of the Approach

Data gaps. Ad blockers, GDPR consent requirements, and mobile platform restrictions create blind spots.

Noise. Cursor movement does not always equal intent.

Edge cases remain. Still essential for security and stability.

Organizational barriers. QA often lacks analytics access.

Behavior-led QA is not a silver bullet. It’s a shift in prioritization.

Why Behavior-led QA Matters Now

Business: UX friction costs more revenue than rare crashes.

Users: They forgive minor bugs, but not broken journeys.

Teams: QA gains strategic value instead of being a service function.

Conclusion

Behavior-led QA is the shift from checking requirements to safeguarding experience.

Today, the tester is no longer just a guardian of checklists but an advocate for user behavior.

BDD taught us to test “how it should be.” Behavior-led QA forces us to test “how it actually is.”

Behavior-led QA is how we test the business experience itself.

Feb 10, 2026