Architecting the MVP

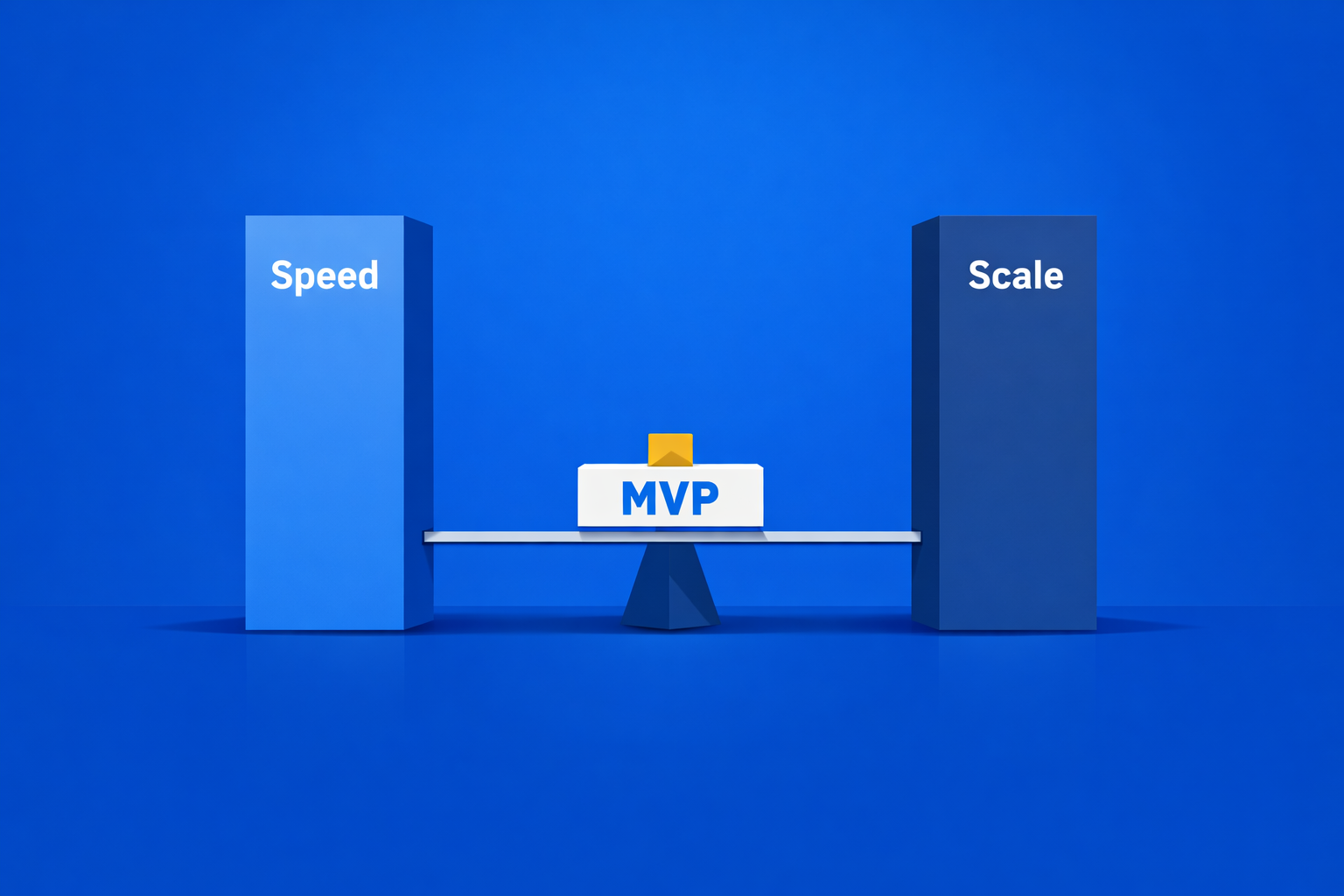

The Minimum Viable Product (MVP) is the crucible of startup engineering. It demands ruthless prioritization, often forcing a direct conflict between speed (getting to market quickly for validation) and scale (building a system that won't immediately collapse under success).

In practice, the goal for product teams is learning. For us as engineers, the goal is slightly more nuanced: deliver the fastest possible learning vehicle without incurring crippling technical debt that necessitates a complete, painful rewrite the moment you get featured on Product Hunt. This deep dive explores the engineering mindset required to navigate this conflict, identifying the critical architectural decisions where investing in scale now prevents catastrophic refactoring debt later.

The Trap of Premature Scaling

The primary goal of an MVP is market validation. Until you have verified that customers will actually pay for and use your product, most of what you build for future scale is likely to be wasted time and money. This is the core principle of YAGNI (You Aren't Gonna Need It). A common setup we see is a three-person team spending two months setting up a multi-region Kubernetes cluster for an app that currently has zero users. Premature scaling is costly, primarily because of the unnecessary complexity and operational overhead it introduces.

When I Vehemently Choose Speed (The First 6 Months)

In the initial stages, I recommend choosing speed over scale in these areas:

1. Microservices: Do not start with a distributed system. The operational complexity of service discovery, distributed tracing, network latency, and infrastructure management (Kubernetes/ECS) will crush a small team’s velocity. A simple, modular monolith deployed to a single server or container is inherently faster to develop and debug.

2. Over-engineered Caching: Resist the urge to implement Redis or Memcached unless you have actual, proven performance bottlenecks that impact the user experience. Simple HTTP caching headers (CDN) or in-memory caching will suffice until real load demands more.

3. Advanced Database Sharding: If your data can fit on a single, powerful database instance (which is true for nearly all MVPs), do not attempt to shard your database from day one. Sharding is a high-cost, high-complexity problem to be solved only when necessitated by massive scale.
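To illustrate the in-memory caching mentioned in item 2, here is a minimal sketch using Python's standard `functools.lru_cache`. The function `load_plan_features` and its lookup table are hypothetical stand-ins for a slow database query or API call; they are not part of any real service.

```python
from functools import lru_cache

# Hypothetical stand-in for a slow lookup (database query, third-party API).
_PLAN_FEATURES = {
    "free": ("basic_reports",),
    "pro": ("basic_reports", "exports"),
}
_lookup_calls = 0


@lru_cache(maxsize=256)
def load_plan_features(plan: str) -> tuple:
    """Return the features for a plan, caching results in process memory."""
    global _lookup_calls
    _lookup_calls += 1  # counts how often the "slow" path actually runs
    return _PLAN_FEATURES.get(plan, ())


def lookup_calls() -> int:
    """Expose the counter so callers can see how many lookups were not cached."""
    return _lookup_calls
```

Repeated calls with the same argument are served from memory. The cache is per process and has no expiry, so stale entries persist until `load_plan_features.cache_clear()` is called. That trade-off is usually acceptable at MVP scale and is one signal for when a shared cache becomes worth its cost.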

The Lesson: If a component’s complexity increases development time by more than 20% but doesn’t directly enable the core user journey or market learning, defer it.

The Critical Hotspots: When to Choose Scale Now

While most complexity should be deferred, certain foundational architectural components carry a cost of change (or "refactoring debt") that climbs steeply once they are baked in. These are the areas where I believe a strategic investment in scale now saves you months of pain later.

1. Data Model and Database Backbone

The data layer is the DNA of your application. Changing the core structure later is the most expensive type of technical debt.

In practice, teams usually hit this wall when they realize their "quick and dirty" NoSQL schema makes it impossible to run the complex relational queries their new "Analytics Dashboard" feature requires.

The Investment: Choose a flexible, robust database that can handle growth and unexpected data types. A good example is PostgreSQL.

Why Scale Wins: PostgreSQL offers the maturity of a relational database (transactions, integrity) combined with the flexibility of NoSQL via its powerful JSONB data type. You can structure your core entities relationally while accommodating rapidly changing feature requirements (unstructured data) in JSONB columns. This lets you build fast without being boxed into rigid schemas. Rebuilding the entire data model or migrating databases mid-growth can halt a company for months.

The Action: Don't just pick Postgres; ensure your initial data schemas are logically separated and use the best indexing strategies relevant to your core user queries (e.g., index your foreign keys and common search fields).
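As a rough sketch of what this can look like, the snippet below keeps PostgreSQL DDL as plain strings and applies them through any DB-API 2.0 connection (for example, one created with psycopg). The `users` and `orders` tables, their columns, and the index names are illustrative assumptions, not a recommended schema. Note that PostgreSQL does not automatically index the referencing column of a foreign key, which is why `orders.user_id` gets its own index.

```python
# PostgreSQL DDL kept as plain strings; table and column names are illustrative.
SCHEMA_STATEMENTS = [
    """
    CREATE TABLE IF NOT EXISTS users (
        id          BIGSERIAL PRIMARY KEY,
        email       TEXT NOT NULL UNIQUE,
        created_at  TIMESTAMPTZ NOT NULL DEFAULT now()
    )
    """,
    """
    CREATE TABLE IF NOT EXISTS orders (
        id          BIGSERIAL PRIMARY KEY,
        user_id     BIGINT NOT NULL REFERENCES users (id),
        status      TEXT NOT NULL,
        -- Fast-changing, feature-specific fields live here until they stabilize.
        attributes  JSONB NOT NULL DEFAULT '{}'::jsonb
    )
    """,
    # Foreign-key column: PostgreSQL does not index this automatically.
    "CREATE INDEX IF NOT EXISTS orders_user_id_idx ON orders (user_id)",
    # Common search field.
    "CREATE INDEX IF NOT EXISTS orders_status_idx ON orders (status)",
    # GIN index so containment queries on the JSONB column can use an index.
    "CREATE INDEX IF NOT EXISTS orders_attributes_gin_idx "
    "ON orders USING GIN (attributes)",
]


def apply_schema(connection) -> int:
    """Run each statement through a DB-API 2.0 connection and commit."""
    cursor = connection.cursor()
    try:
        for statement in SCHEMA_STATEMENTS:
            cursor.execute(statement)
    finally:
        cursor.close()
    connection.commit()
    return len(SCHEMA_STATEMENTS)
```

The core entities stay relational, with constraints and foreign keys, while `attributes` absorbs fields that are still changing from sprint to sprint. Once a JSONB field stabilizes and is queried often, it can be promoted to a real column.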

2. Authentication and Authorization (Security)

Security is a binary state: you are either secure or you are a liability.

The Investment: Never roll your own authentication. Use a well-established, industry-standard service or open-source framework (like Firebase Auth, Auth0, or an OAuth 2.0/OpenID Connect flow).

Why Scale Wins: This isn't about handling 10 million users; it's about not shipping avoidable vulnerabilities. Investing in a standard system means you benefit from professional security audits, standard token management (JWT/Refresh Tokens), and operational features such as rate limiting and email verification from day one. Refactoring security protocols, user tables, and password hashing after users have signed up is arguably the most dangerous type of debt.

The Action: Decouple user authentication entirely from your core backend logic. Use middleware to enforce authorization checks based on standard bearer tokens.
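A minimal, framework-agnostic sketch of that middleware idea follows. The `Request` and `Response` classes are simplified stand-ins for whatever your web framework provides. `verify_token` is a hypothetical callable that you would back with your auth provider's SDK or a JWT library; the sketch deliberately does not implement token validation itself.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Request:
    headers: dict
    user_id: Optional[str] = None  # filled in by the middleware on success


@dataclass
class Response:
    status: int
    body: str


def extract_bearer_token(headers: dict) -> Optional[str]:
    """Pull the token out of an 'Authorization: Bearer <token>' header.

    Assumes the header key is spelled exactly 'Authorization'; real frameworks
    normalize header case for you.
    """
    value = headers.get("Authorization", "")
    scheme, _, token = value.partition(" ")
    if scheme.lower() != "bearer" or not token.strip():
        return None
    return token.strip()


def require_auth(verify_token: Callable[[str], Optional[str]]):
    """Build a decorator that rejects requests without a valid bearer token.

    verify_token is injected, so the handlers never know how tokens are
    checked. It returns a user id for a valid token and None otherwise.
    """
    def decorator(handler: Callable[[Request], Response]):
        def wrapped(request: Request) -> Response:
            token = extract_bearer_token(request.headers)
            user_id = verify_token(token) if token else None
            if user_id is None:
                return Response(401, "unauthorized")
            request.user_id = user_id
            return handler(request)
        return wrapped
    return decorator
```

Because the verifier is passed in, swapping auth providers later means changing one function rather than every handler.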

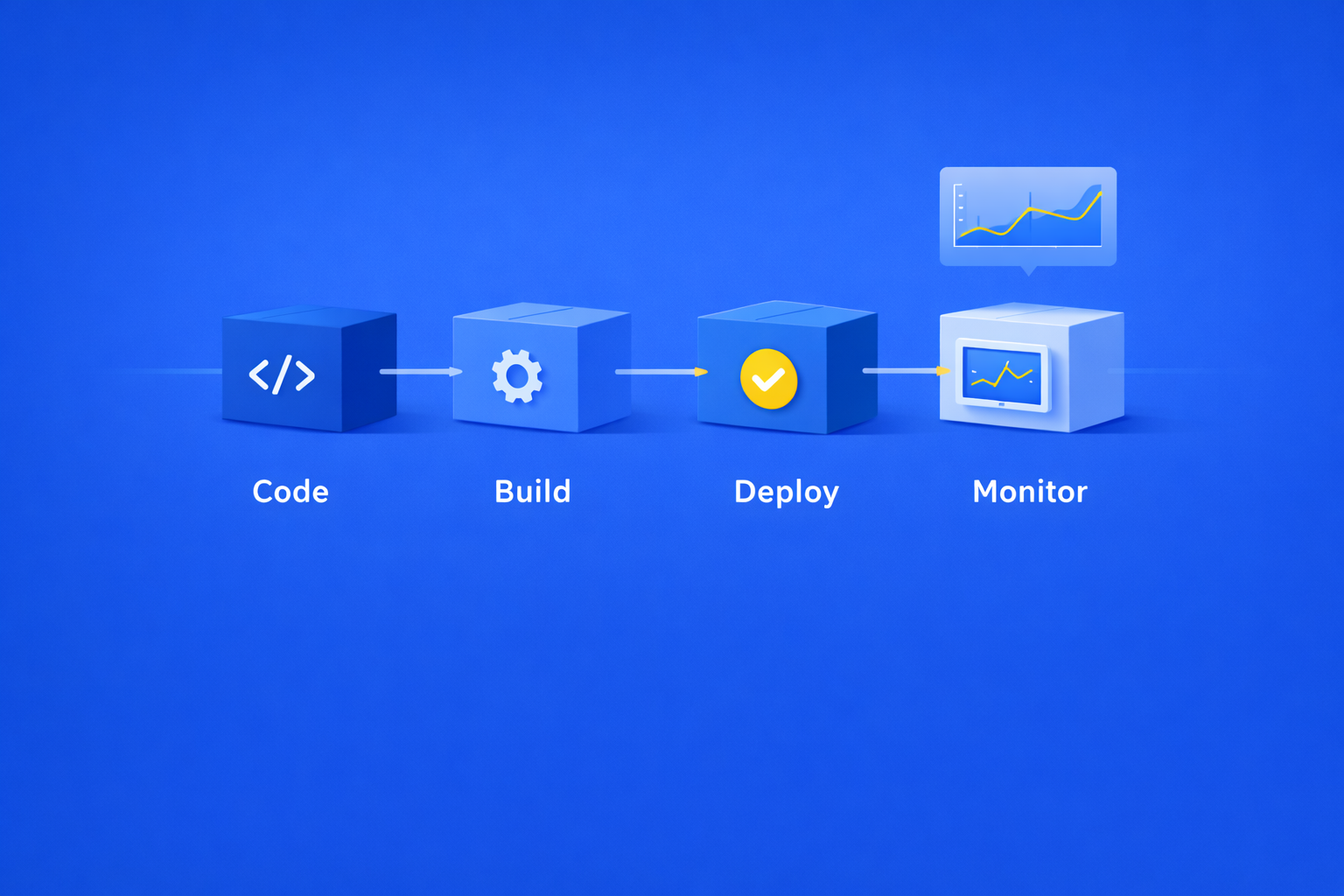

3. Deployment and Observability Pipelines (CI/CD)

A common setup we see is "cowboy coding" where developers SSH into a server to pull git changes manually. This breaks the learning loop.

The Investment: Set up a professional, automated CI/CD pipeline and integrate basic logging and error monitoring (observability).

Why Scale Wins: You are scaling your process, not your infrastructure. An automated pipeline means rapid, repeatable, and low-stress deployments. This reduces risk and allows you to push new features (and bug fixes) multiple times a day. Debugging a system without proper logging and metrics quickly becomes impossible once multiple users are involved.

The Action: Use infrastructure-as-code (like Terraform or CloudFormation) for the bare minimum of resources, and set up a simple GitHub Actions or GitLab CI workflow that deploys your service automatically on merge to main.
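For the "basic logging" half of this investment, a small amount of structure goes a long way. The sketch below uses only Python's standard `logging` and `json` modules to emit one JSON object per log line, which most log aggregators can parse. The field names and the `build_logger` helper are assumptions chosen for illustration.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        if record.exc_info:
            # Include the traceback so errors are debuggable from logs alone.
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload)


def build_logger(name: str, stream=None) -> logging.Logger:
    """Create a logger that writes JSON lines to `stream` (stderr by default)."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.handlers.clear()  # avoid duplicate handlers on repeated calls
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.propagate = False
    return logger
```

Calling `build_logger("billing").info("invoice %s created", invoice_id)` then produces a line that can be filtered by `logger` or `level` without regex parsing.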

The Modular Monolith: The Best of Both Worlds

The ideal architectural pattern for a growing MVP is the Modular Monolith. It allows you to develop quickly today while keeping the door open for microservices tomorrow.

Architectural Comparison

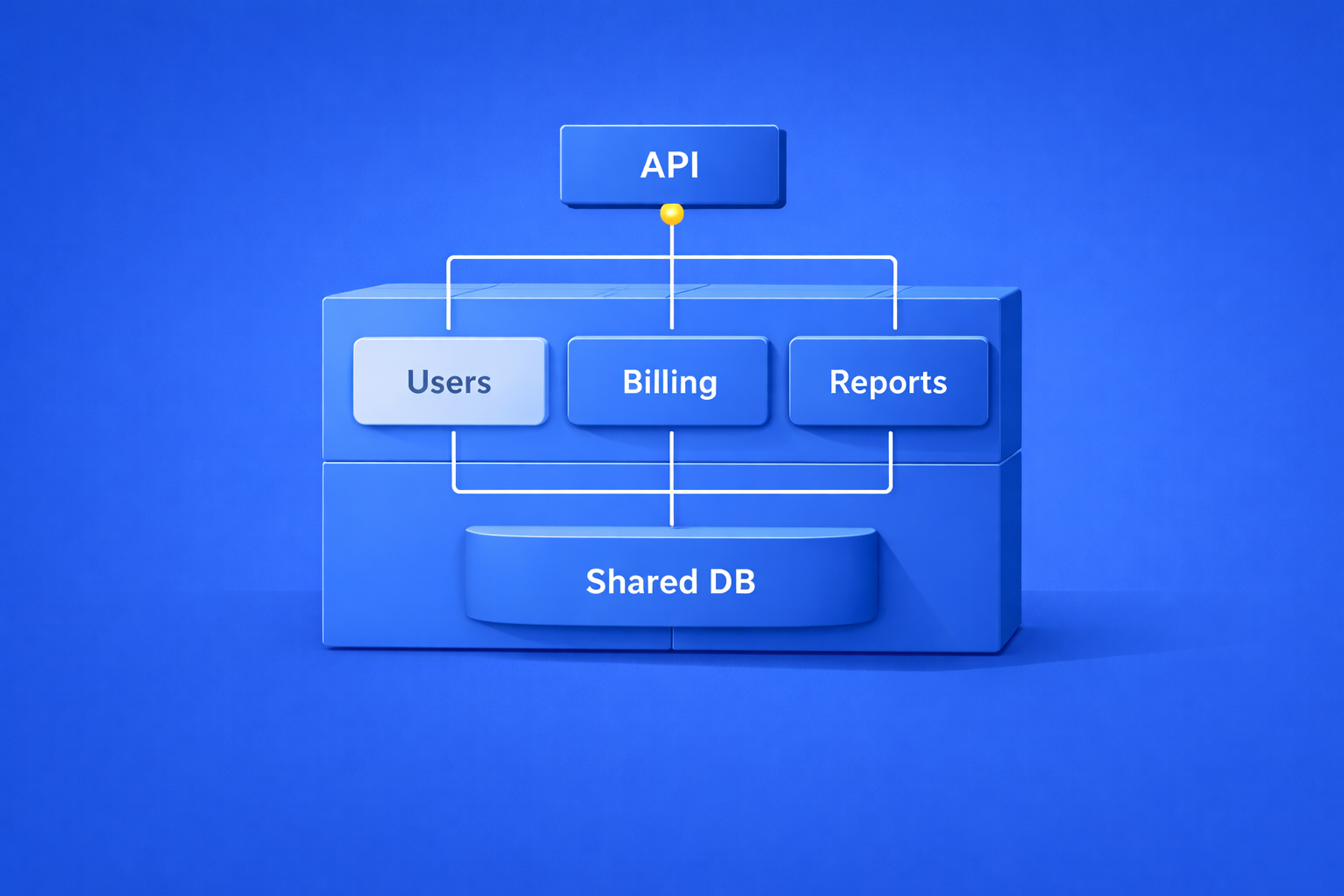

Standard Monolith (The "Big Ball of Mud")

Problem: Changing the "Billing" logic accidentally breaks the "User Profile" logic.

Modular Monolith (The Goal)

Solution: You deploy one unit, but code is organized so that the "Billing" module only talks to "Users" via a defined internal API.

This approach is the perfect balance between speed and scale:

Speed (Monolith): You deploy a single, monolithic application. This means no inter-service communication overhead, no complex service mesh to manage, and simple, unified logging and debugging. Your infrastructure costs are low.

Scale (Microservices Mindset): Internally, your code is structured like a distributed system. Group code into well-defined modules (bounded contexts) with clear public APIs. The User module doesn't directly touch the Invoicing module’s database or internal functions; it interacts through a clean interface.
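One way to picture that internal boundary is a small Python sketch. All names here (`UserDirectory`, `UserSummary`, `InvoicingService`) are hypothetical. The point is only that the invoicing code depends on a narrow interface rather than on the users module's storage.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class UserSummary:
    """The only user data other modules are allowed to see."""
    user_id: str
    email: str


class UserDirectory(Protocol):
    """Public API of the (hypothetical) users module."""
    def get_user(self, user_id: str) -> Optional[UserSummary]: ...


class InMemoryUserDirectory:
    """Users module implementation; its storage stays private."""

    def __init__(self) -> None:
        self._rows: dict = {}

    def add_user(self, user_id: str, email: str) -> None:
        self._rows[user_id] = {"email": email, "password_hash": "not-exposed"}

    def get_user(self, user_id: str) -> Optional[UserSummary]:
        row = self._rows.get(user_id)
        return UserSummary(user_id, row["email"]) if row else None


class InvoicingService:
    """Invoicing module; it knows the UserDirectory interface, nothing more."""

    def __init__(self, users: UserDirectory) -> None:
        self._users = users

    def create_invoice(self, user_id: str, amount_cents: int) -> dict:
        user = self._users.get_user(user_id)
        if user is None:
            raise LookupError(f"unknown user: {user_id}")
        return {"bill_to": user.email, "amount_cents": amount_cents}
```

Invoicing never sees `password_hash` or the storage layout, so the users module can change its internals without rippling into billing code.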

The Decoupling Strategy

By practicing internal decoupling in a monolith, you are perfectly positioned for future success. When a specific module (e.g., the Notification Service) becomes a major performance bottleneck or requires a completely different tech stack (e.g., moving from Node.js to Go for pure processing speed), you can perform a Strangler Fig Migration:

Step 1: Extract that module into its own new container/service.

Step 2: Update the monolithic code to communicate with the new external service via a network call (for example, HTTP or gRPC).

Step 3: The rest of the monolith continues running unchanged.
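The three steps can be sketched in Python under a few assumptions: the notification module already sits behind a `Notifier` interface, the extracted service exposes a hypothetical `POST /notifications` endpoint, and the payload shape is invented for illustration. Only the object passed to callers changes; `welcome_user` stands in for "the rest of the monolith".

```python
import json
import urllib.request
from typing import Callable, Protocol


class Notifier(Protocol):
    """Interface the monolith already depends on."""
    def send(self, user_id: str, message: str) -> bool: ...


class InProcessNotifier:
    """Before extraction: notifications handled inside the monolith."""

    def __init__(self) -> None:
        self.sent: list = []

    def send(self, user_id: str, message: str) -> bool:
        self.sent.append((user_id, message))
        return True


def _post_json(url: str, payload: dict) -> int:
    """POST a JSON body and return the HTTP status.

    urlopen raises HTTPError on 4xx/5xx responses; a production version
    would catch that and add retries.
    """
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=5) as response:
        return response.status


class HttpNotifier:
    """After extraction: same interface, but the work happens over the network."""

    def __init__(self, base_url: str,
                 post: Callable[[str, dict], int] = _post_json) -> None:
        self._url = base_url.rstrip("/") + "/notifications"  # hypothetical path
        self._post = post

    def send(self, user_id: str, message: str) -> bool:
        status = self._post(self._url, {"user_id": user_id, "message": message})
        return 200 <= status < 300


def welcome_user(notifier: Notifier, user_id: str) -> bool:
    """Monolith code that is unchanged by the migration (Step 3)."""
    return notifier.send(user_id, "Welcome aboard!")
```

Switching from `InProcessNotifier()` to `HttpNotifier("http://notifications.internal")` at the composition root is the whole migration from the caller's point of view, which is why the earlier investment in module boundaries pays off here.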

This approach allows you to evolve your architecture when and where performance demands it, without the painful upfront investment required by a full microservices architecture.

Conclusion

Architecting an MVP is less about predicting the future and more about mitigating the most expensive risks. Always prioritize speed for feature development and market testing. However, be ruthless in identifying the three foundational components (the Data Model, Authentication, and the CI/CD Pipeline) where initial investment prevents catastrophic debt. By starting with a modular monolith and building solid, decoupled foundations, you create a system that is fast to launch, cheap to operate, and well positioned to decompose and scale when the market finally validates your product.

Feb 3, 2026